Deploy Services and Configure Gateway Rules

The User Experience changes little for the Application Owner, whether they interact directly with the Kubernetes platform using native Kubernetes APIs, or whether they rely on an indirect method to deploy and configure the platform (such as a CD pipeline).

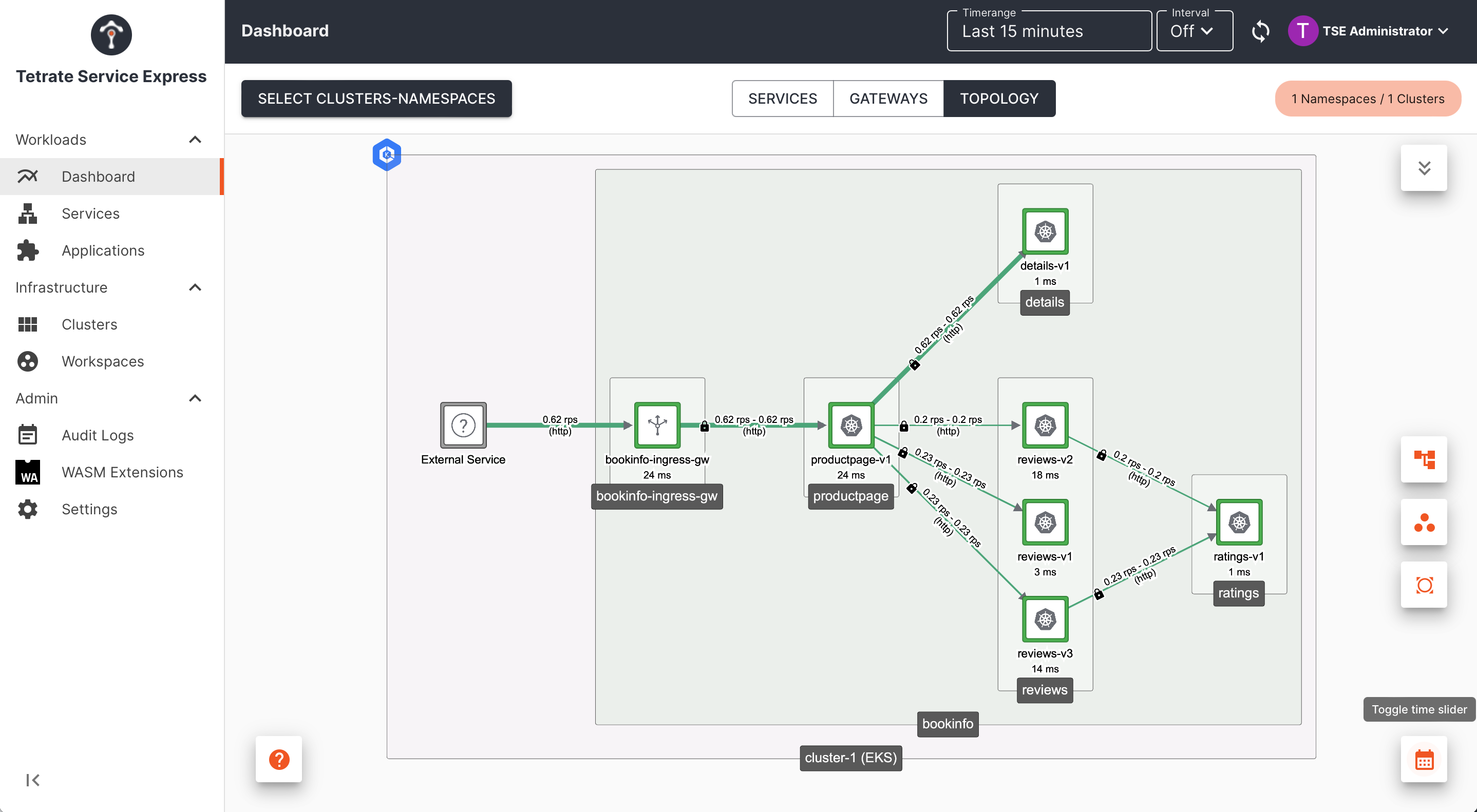

The Application Owner ("Apps") will deploy and expose a service as follows:

Deploy the Service

Deploy the intended services in the Kubernetes namespace(s) provided by the Platform Owner

Expose the Service

Configure a Gateway resource to expose the service through the local Ingress Gateway

Apps: Before you begin

Before you begin, you will need the following information from your administrator (the Platform Owner):

- Kubernetes clusters and namespaces: the necessary API access to deploy and manage services within the chosen namespaces

- Tetrate Topology: Kubernetes namespaces are grouped into Tetrate Workspaces, and a Workspace can include namespaces from several clusters. You'll need to know the topology, including:

  - The name of the Tetrate Workspace

  - The namespaces and clusters in that Workspace

  - The names of the Tetrate Gateway Groups (typically each Workspace has one Gateway Group per cluster)

Note that the most common security posture for Tetrate deployments is to allow traffic within a Workspace, and to deny all traffic between Workspaces and between mesh services that are not in a Workspace. Your administrator can open up additional flows between Workspaces if needed.

Apps: Deploy the Service

Deploy your service into the Kubernetes namespaces provided by the Platform Owner, for example:

kubectl apply -n bookinfo -f https://raw.githubusercontent.com/istio/istio/master/samples/bookinfo/platform/kube/bookinfo.yaml

Namespaces that are managed by Tetrate will have Istio injection enabled. You can verify this by running kubectl describe ns <namespace> and looking for the label istio-injection=enabled. Pods that you deploy will have an additional istio-proxy container at runtime, as well as several transient init containers.
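The check described above can be scripted. The following is a minimal sketch, assuming kubectl is already configured for the cluster; the function names injection_label and pod_containers are hypothetical helpers introduced here for illustration and are not part of kubectl or Tetrate:

```shell
# Print the value of the istio-injection label on a namespace
# (prints nothing if the label is not set).
injection_label() {
  kubectl get namespace "$1" -o jsonpath='{.metadata.labels.istio-injection}'
}

# List each pod in a namespace together with the names of its containers.
# Pods with a sidecar should include a container named istio-proxy.
pod_containers() {
  kubectl get pods -n "$1" \
    -o jsonpath='{range .items[*]}{.metadata.name}{": "}{.spec.containers[*].name}{"\n"}{end}'
}
```

For example, injection_label bookinfo should print enabled for a namespace managed by Tetrate, and pod_containers bookinfo should show istio-proxy alongside each application container.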

Apps: Expose the Service

Expose a service using a Gateway resource. This will configure the Ingress Gateway to load-balance traffic to your service:

Figure: Exposing a service through an Ingress Gateway

The Gateway resource references the following resources, which should be provided by your administrator (the Platform Owner):

- Tetrate Organization tse, Tenant tse, Workspace bookinfo-ws and Gateway Group bookinfo-gwgroup-1

- Ingress Gateway, located in bookinfo/bookinfo-ingress-gw

The annotations and the workloadSelector in the following manifest contain the names of these resources:

cat <<EOF > bookinfo-gateway.yaml
apiVersion: gateway.tsb.tetrate.io/v2
kind: Gateway
metadata:
  name: bookinfo-gateway
  annotations:
    tsb.tetrate.io/organization: tse
    tsb.tetrate.io/tenant: tse
    tsb.tetrate.io/workspace: bookinfo-ws
    tsb.tetrate.io/gatewayGroup: bookinfo-gwgroup-1
spec:
  workloadSelector:
    namespace: bookinfo
    labels:
      app: bookinfo-ingress-gw
  http:
  - name: bookinfo
    port: 80
    hostname: bookinfo.tse.tetratelabs.io
    routing:
      rules:
      - route:
          serviceDestination:
            host: bookinfo/productpage.bookinfo.svc.cluster.local
            port: 9080
EOF

kubectl apply -f bookinfo-gateway.yaml

This configuration is instantiated on the selected Ingress Gateway. An Ingress Gateway is an Envoy-based proxy that listens for incoming traffic (in this case, HTTP traffic on port 80). Traffic with the host header bookinfo.tse.tetratelabs.io is then routed to your service bookinfo/productpage.bookinfo.svc.cluster.local, on port 9080.

Understanding Tetrate Gateways

Behind the scenes, the Tetrate Gateway object you applied will be processed by the Tetrate Management Plane in Tetrate's configuration hierarchy, and then used to create one or more Istio gateway objects:

kubectl get gateway -n bookinfo bookinfo-gateway -o yaml

The gateway object contains a selector which identifies the Ingress Gateway (an Envoy proxy pod) that should instantiate this configuration.

Tetrate Parameters

If you get any of the Tetrate parameters incorrect, the apply operation will be denied. You will receive an error message similar to:

Error from server: error when creating "bookinfo-gateway.yaml": admission webhook "gitops.tsb.tetrate.io" denied the request: computing an access decision: checking permissions [organizations/tse/serviceaccounts/auto-cluster-cluster-1#exHFFwuGQSCPQP941C4ilMZFWwmO4lJoTyQXKjAPHXk ([CreateGateway]) organizations/tse/tenants/tse/workspaces/bookinfo-ws/gatewaygroups/bookinfo-gwgroup]: target "organizations/tse/tenants/tse/workspaces/bookinfo-ws/gatewaygroups/bookinfo-gwgroup" does not exist: node not found

These four parameters (organization, tenant, workspace and gatewayGroup) correspond to Tetrate configuration created by the administrator (Platform Owner), and they define the location in the Tetrate configuration hierarchy where your Gateway resource should be located.
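When debugging a denied request, it can help to compare your annotation values with the target path quoted in the error. The sketch below builds that path from the four values; the path layout is inferred from the sample error message above, and the function name gateway_group_path is a hypothetical helper, not a Tetrate command:

```shell
# Build the hierarchy path of a Gateway Group from the four annotation values.
# The layout is inferred from the "target" string in the sample error message.
gateway_group_path() {
  local org="$1" tenant="$2" workspace="$3" group="$4"
  printf 'organizations/%s/tenants/%s/workspaces/%s/gatewaygroups/%s\n' \
    "$org" "$tenant" "$workspace" "$group"
}

# Values taken from the annotations used in this guide.
gateway_group_path tse tse bookinfo-ws bookinfo-gwgroup-1
```

With the values from this guide, the path ends in gatewaygroups/bookinfo-gwgroup-1, whereas the target in the sample error ends in gatewaygroups/bookinfo-gwgroup. A difference like this points at the annotation to correct.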

Workload Selector

The workloadSelector stanza identifies the Ingress Gateway (proxy) to which this configuration should be applied. If any of these parameters are incorrect (they don't match an Ingress Gateway), the configuration will not be applied.

In the example above, you can find the Ingress Gateway as follows:

kubectl get pods -n bookinfo -l app=bookinfo-ingress-gw
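Because a mismatched selector means the configuration is silently not applied, it may be worth checking the selector values before applying the Gateway resource. The following is a minimal sketch, assuming kubectl access to the namespace; matching_pods and check_workload_selector are hypothetical helper names:

```shell
# Count the pods in a namespace that match a label selector.
matching_pods() {
  local namespace="$1" selector="$2"
  kubectl get pods -n "$namespace" -l "$selector" -o name | wc -l | tr -d ' '
}

# Report whether the namespace and label from a workloadSelector
# match at least one pod; return non-zero if nothing matches.
check_workload_selector() {
  local count
  count="$(matching_pods "$1" "$2")"
  if [ "$count" -eq 0 ]; then
    echo "no pods match '$2' in namespace '$1'" >&2
    return 1
  fi
  echo "$count pod(s) match '$2' in namespace '$1'"
}
```

For the example in this guide, the call would be check_workload_selector bookinfo app=bookinfo-ingress-gw.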

Inspecting the Ingress Gateway

The Ingress Gateway pod is deployed in one of the namespaces that are managed by your assigned Tetrate Workspace:

kubectl get ingressgateway -A

Inspect the Ingress Gateway configuration, and view the pod hosting the Envoy proxy:

kubectl describe ingressgateway -n bookinfo bookinfo-ingress-gw

kubectl describe pod -n bookinfo -l app=bookinfo-ingress-gw

Follow the logs, including real-time access logs:

kubectl logs -f -n bookinfo -l app=bookinfo-ingress-gw

Route 53 Synchronization

If the Administrator has configured AWS Route 53 synchronization, then the Tetrate integration will also automatically provision a Route 53 DNS name based on the hostname in your Gateway resource.

If you have permissions to access the istio-system namespace, you can follow the logs from Tetrate's AWS Controller:

kubectl logs -f -n istio-system -l app=aws-controller

Accessing the Service through the Ingress Gateway

If DNS is configured for you, you should be able to access the service directly:

curl http://bookinfo.tse.tetratelabs.io

You can also determine the public address (IP or FQDN) of the Ingress Gateway and send traffic directly:

export GATEWAY_IP=$(kubectl -n bookinfo get service bookinfo-ingress-gw -o jsonpath="{.status.loadBalancer.ingress[0]['hostname','ip']}")

curl -s --connect-to bookinfo.tse.tetratelabs.io:80:$GATEWAY_IP \
    "http://bookinfo.tse.tetratelabs.io/productpage"
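For a quick pass/fail check, the same request can be reduced to its HTTP status code. This is a sketch under the assumption that the gateway listens on port 80, as in the example above; gateway_status is a hypothetical helper name:

```shell
# Print the HTTP status code returned for a hostname and path when
# connecting directly to the given gateway address (IP or FQDN) on port 80.
gateway_status() {
  local hostname="$1" gateway="$2" path="${3:-/}"
  curl -s -o /dev/null -w '%{http_code}' \
    --connect-to "${hostname}:80:${gateway}:80" \
    "http://${hostname}${path}"
}
```

With GATEWAY_IP set as above, gateway_status bookinfo.tse.tetratelabs.io "$GATEWAY_IP" /productpage prints only the status code, which is convenient in scripts that wait for the route to become available.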