Key Metrics

Tetrate Service Bridge collects a large number of metrics. This page is generated from dashboards run internally at Tetrate and is updated periodically based on best practices learned from operational experience at Tetrate and from user deployments. Each heading represents a dashboard, and each sub-heading is a panel on that dashboard. For this reason, a metric may appear multiple times.

The metrics in this document refer to TSB components, so be sure to check the TSB architecture to get a good understanding of each component and its function.

The list of available dashboards can be obtained with the tctl experimental grafana dashboard command. To download a dashboard in JSON format to upload it to Grafana, you can run the command as follows:

tctl experimental grafana dashboard <dashboard file name> -o json

You can also upload the dashboards directly to your Grafana instance using the tctl experimental grafana upload command.

Control Plane Operator metrics

Dashboard to show the status of control plane operator metrics.

Control Plane Mode

This panel represents the control plane mode configured in the control plane cluster.

Possible values are:

- CONTROL (default)

- OBSERVE

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| control_plane_mode | N/A | control_plane_mode == 1 |

Closest Token To Expire

The 10 clusters that have a token that will expire the soonest.

Tokens are rotated halfway through their validity period. By default, tokens are valid for one hour, so they are rotated 30 minutes after creation. If a cluster's time is displayed in red or with a negative value, at least one token has expired without being rotated. In such cases, review the "Token Rotation Execution Failed" chart to check for any failed token rotation attempts.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| token_expiration_timestamp | cluster_name | max(max_over_time(token_expiration_timestamp{cluster_name=~"${cluster}"}[1m])) by (cluster_name) - time() |
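The timing described for this panel can be sketched in a few lines of Python. This is only an illustration of the arithmetic (rotation at half the validity period, and a negative time-to-expiry once a token has expired); the function names and sample timestamps are hypothetical and are not part of TSB.

```python
from datetime import datetime, timedelta, timezone


def rotation_time(created_at: datetime, validity: timedelta) -> datetime:
    """Return the moment a token is due for rotation: halfway through its validity."""
    return created_at + validity / 2


def seconds_to_expiry(expires_at: datetime, now: datetime) -> float:
    """Mirror `token_expiration_timestamp - time()`: a negative result means expired."""
    return (expires_at - now).total_seconds()


# Hypothetical sample values; the one-hour validity is the default stated above.
created = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
validity = timedelta(hours=1)
expires_at = created + validity

print(rotation_time(created, validity).isoformat())
print(seconds_to_expiry(expires_at, created + timedelta(minutes=75)))
```

With these sample values the token is due for rotation at 12:30, and a reading taken 75 minutes after creation is negative, which is the situation the panel highlights in red.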

Clusters Not Validating Tokens

Each cluster should validate all of its tokens every 60 seconds. A cluster appears in this panel when more than 120 seconds have passed since it last validated its tokens and reported the result.

This doesn't necessarily mean that a token is invalid or is not being rotated properly. For example, the metrics may not have been reported correctly or in a timely manner, or there may be a problem with the TSB Control Plane operator.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| last_tokens_validation_timestamp | cluster_name | max(time() - max_over_time(last_tokens_validation_timestamp{cluster_name=~"${cluster}"}[1m])) by (cluster_name) |
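As a rough illustration of the staleness rule, the following Python sketch computes the age of each cluster's last validation and keeps only those older than 120 seconds. The function name, the dictionary layout, and the sample cluster names are assumptions made for the example.

```python
STALE_AFTER_SECONDS = 120  # threshold taken from the panel description


def stale_clusters(last_validation: dict[str, float], now: float) -> dict[str, float]:
    """Return the clusters whose last validation is older than the threshold.

    `last_validation` maps a cluster name to a Unix timestamp (hypothetical layout).
    The result maps each stale cluster to the age of its last validation in seconds.
    """
    ages = {name: now - ts for name, ts in last_validation.items()}
    return {name: age for name, age in ages.items() if age > STALE_AFTER_SECONDS}


# Hypothetical sample: "east" validated 50 s ago, "west" 200 s ago.
print(stale_clusters({"east": 950.0, "west": 800.0}, now=1000.0))
```

Only the cluster that reported more than 120 seconds ago is returned, which matches what this panel lists.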

Valid Tokens

Number of valid control plane tokens.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| valid_tokens | cluster_name | max(max_over_time(valid_tokens{cluster_name=~"${cluster}"}[1m])) by (cluster_name) |

Token Rotation Executions

Number of token rotation executions. An execution doesn't necessarily mean that a token has been rotated, because the tokens might still be valid.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| token_rotation_executions_count_total | cluster_name | sum(increase(token_rotation_executions_count_total{cluster_name=~"${cluster}"}[1m])) by(cluster_name) |
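The panel relies on PromQL's increase() over a counter. The Python sketch below shows the core idea on a list of raw counter samples, including how a counter reset is handled. It is a simplification: Prometheus also extrapolates to the window boundaries, which is omitted here, and the function name is hypothetical.

```python
def counter_increase(samples: list[float]) -> float:
    """Sum the increases between consecutive counter samples.

    A drop in value is treated as a counter reset, so the value after the
    reset counts as new increase. Window-boundary extrapolation, which
    Prometheus applies, is intentionally left out of this sketch.
    """
    total = 0.0
    for prev, cur in zip(samples, samples[1:]):
        total += cur - prev if cur >= prev else cur
    return total


print(counter_increase([10, 12, 15]))     # steady growth
print(counter_increase([10, 12, 3, 4]))   # counter restarted after the second sample
```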

Token Rotation Execution Failed

Number of failed token rotation executions. Errors in this chart mean that a token could not be rotated. Check the TSB Control Plane operator logs to find the cause, using the following command: kubectl logs -n istio-system -l name=tsb-operator --tail=-1 | grep "token rotation failed, retrying in"

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| token_rotation_executions_count_total | cluster_name status | sum(increase(token_rotation_executions_count_total{cluster_name=~"${cluster}", status="failed"}[5m])) by(cluster_name) |

Tokens Exceeded Rotation Time

Number of control plane tokens that exceeded their rotation time.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| token_exceeded_rotation_time | cluster_name | max(max_over_time(token_exceeded_rotation_time{cluster_name=~"${cluster}"}[1m])) by (cluster_name) |

Tokens Rotated Successfully

Number of tokens that have been rotated successfully. A value of 0 might mean that the token rotation execution is failing or that the TSB Control Plane operator is not running.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| token_rotations_count_total | cluster_name status | sum(increase(token_rotations_count_total{cluster_name=~"${cluster}", status="success"}[1m])) by(cluster_name) |

Failed Tokens to Rotate

Number of tokens that could not be rotated.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| token_rotations_count_total | cluster_name status | sum(increase(token_rotations_count_total{cluster_name=~"${cluster}", status="failed"}[1m])) by(cluster_name) |

Tokens Exceeded Rotation Timeline

Number of control plane tokens that exceeded their rotation time.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| token_exceeded_rotation_time | cluster_name | max(max_over_time(token_exceeded_rotation_time{cluster_name=~"${cluster}"}[1m])) by (cluster_name) |

Valid Tokens Timeline

Number of valid control plane tokens.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| valid_tokens | cluster_name | sum(valid_tokens{cluster_name=~"${cluster}"}) by (cluster_name) |

Invalid Tokens

Number of invalid control plane tokens grouped by the reason.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| invalid_tokens | cluster_name | sum by (reason) (invalid_tokens{cluster_name=~"$cluster"}) |
| token_missing_rotate_at_annotation | cluster_name | sum by (name) (token_missing_rotate_at_annotation{cluster_name=~"$cluster"}) |

Embedded Postgres

Postgres Scrape Status

Shows the status of the metrics collection process.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_exporter_last_scrape_error | N/A | sum(pg_exporter_last_scrape_error) |

Postgres Scrape Status

Shows the status of the metrics collection process, broken down by role.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_exporter_last_scrape_error | N/A | max(pg_exporter_last_scrape_error) by (role) |

Postgres UP

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_up | N/A | max by(role) (pg_up) |

Kubegres Reconciliation Health

Monitors the health of the Kubegres controller by tracking reconciliation success rate, errors, and total reconciliations. This is the most critical dashboard for understanding if the operator is functioning correctly.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| controller_runtime_reconcile_total | component | sum by(result) (rate(controller_runtime_reconcile_total{component="kubegres"}[1m])) |

Kubegres Reconciliation Latency P95

Tracks how long reconciliation loops take to complete. High latency may indicate issues with resources, API server slowness, or complex state changes. Use this to identify performance degradation.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| controller_runtime_reconcile_time_seconds_bucket | component | sum by(le) (histogram_quantile(0.95, rate(controller_runtime_reconcile_time_seconds_bucket{component="kubegres"}[1m]))) |

Kubegres Work Queue Status

Monitors the controller's work queue to detect backpressure, retries, and processing delays. A growing queue depth or high retry count indicates the controller is struggling to keep up with changes.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| workqueue_depth | component | max by(cluster_name) (workqueue_depth{component="kubegres"}) |
| workqueue_retries_total | component | max by(cluster_name) (rate(workqueue_retries_total{component="kubegres"}[1m])) |

Current Replication Lag

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_replication_lag_seconds | N/A | max by(role) (pg_replication_lag_seconds) |

Max Replication Lag [s]

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_replication_lag_seconds | N/A | max by(role) (pg_replication_lag_seconds) |

Active Replication Slots

Shows the number of active replication slots per cluster. Having an inactive replication slot for an extended period can cause WAL files to accumulate and fill up the database filesystem. Expect: the number of active replication slots equals the number of running replicas. If replication slots are disabled, expect: No Data.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_replication_slots_active | N/A | count by (cluster_name) (pg_replication_slots_active==1) |

Inactive Replication Slots

Shows the number of inactive replication slots per cluster. Having an inactive replication slot for an extended period can cause WAL files to accumulate and fill up the database filesystem. Expect: No Data in the common scenario, but expect some entries for a short time in case of replicas restart or scale up/down.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_replication_slots_active | N/A | count by (cluster_name) (pg_replication_slots_active==0) |

Connections used

Percentage of max_connections used

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_settings_max_connections | N/A | sum(pg_stat_database_numbackends)/max(pg_settings_max_connections) |
| pg_stat_database_numbackends | N/A | sum(pg_stat_database_numbackends)/max(pg_settings_max_connections) |

Connections used

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_settings_max_connections | N/A | 100*sum(pg_stat_database_numbackends)/max(pg_settings_max_connections) |
| pg_stat_database_numbackends | N/A | 100*sum(pg_stat_database_numbackends)/max(pg_settings_max_connections) |
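The expression above sums the backends of every database and divides by the configured connection limit. A minimal Python sketch of the same arithmetic follows; the function name and the sample database names and counts are hypothetical.

```python
def connections_used_percent(numbackends_by_db: dict[str, int], max_connections: int) -> float:
    """Percentage of max_connections in use, summed over all databases."""
    if max_connections <= 0:
        raise ValueError("max_connections must be positive")
    return 100 * sum(numbackends_by_db.values()) / max_connections


# Hypothetical sample: 25 backends in total against a limit of 100.
print(connections_used_percent({"tsb": 20, "postgres": 5}, max_connections=100))
```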

Failed WAL archiving attempts

This value should be 0.

Source: pg_stat_archiver

With log_lock_waits turned on, deadlocks will be logged to the PostgreSQL Logfiles.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_stat_archiver_failed_count_total | role | max by(role) (pg_stat_archiver_failed_count_total{role="primary"}) |

DBs Size

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_database_size_bytes | role | max by(datname) (pg_database_size_bytes{role="primary"}) |

Table Size

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_stat_user_tables_index_size_bytes | role | max((pg_stat_user_tables_table_size_bytes{role="primary"}+pg_stat_user_tables_index_size_bytes{role="primary"}) or pg_stat_user_tables_size_bytes{role="primary"}) by (relname) |
| pg_stat_user_tables_size_bytes | role | max((pg_stat_user_tables_table_size_bytes{role="primary"}+pg_stat_user_tables_index_size_bytes{role="primary"}) or pg_stat_user_tables_size_bytes{role="primary"}) by (relname) |
| pg_stat_user_tables_table_size_bytes | role | max((pg_stat_user_tables_table_size_bytes{role="primary"}+pg_stat_user_tables_index_size_bytes{role="primary"}) or pg_stat_user_tables_size_bytes{role="primary"}) by (relname) |

Checkpoint sync time

Total amount of time that has been spent in the portion of checkpoint processing where files are synchronized to disk, in milliseconds

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_stat_bgwriter_checkpoint_sync_time_total | role | max(rate(pg_stat_bgwriter_checkpoint_sync_time_total{role="primary"}[1m])) by(role, index) |

WAL segments size

Total size of WAL segments

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_wal_size_bytes | role | max(pg_wal_size_bytes{role="primary"}) by (role) |
| pg_wal_size_bytes | role | max by(role) (pg_wal_size_bytes{role="replica"}) |

Buffer hits percentage by table

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_statio_user_tables_heap_blocks_hit_total | role | max(100 * ( rate(pg_statio_user_tables_heap_blocks_hit_total{role="primary"}[1m]) / ( rate(pg_statio_user_tables_heap_blocks_hit_total{role="primary"}[1m]) + rate(pg_statio_user_tables_heap_blocks_read_total{role="primary"}[1m]) ) )) by (relname) |
| pg_statio_user_tables_heap_blocks_read_total | role | max(100 * ( rate(pg_statio_user_tables_heap_blocks_hit_total{role="primary"}[1m]) / ( rate(pg_statio_user_tables_heap_blocks_hit_total{role="primary"}[1m]) + rate(pg_statio_user_tables_heap_blocks_read_total{role="primary"}[1m]) ) )) by (relname) |
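The buffer hit percentage is the share of heap block requests served from the buffer cache: hits divided by hits plus disk reads. The Python sketch below applies that formula to two rates. The function name is hypothetical, and returning None for an idle table is a choice made for this sketch (the PromQL division would yield NaN in that case).

```python
from typing import Optional


def buffer_hit_percent(hit_rate: float, read_rate: float) -> Optional[float]:
    """Percentage of heap block requests served from cache.

    `hit_rate` and `read_rate` are per-second rates of cache hits and disk reads.
    Returns None when the table saw no block requests at all.
    """
    total = hit_rate + read_rate
    if total == 0:
        return None
    return 100 * hit_rate / total


print(buffer_hit_percent(90.0, 10.0))  # mostly cached
print(buffer_hit_percent(0.0, 0.0))    # idle table
```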

Disk block reads by table

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pg_statio_user_tables_heap_blocks_read_total | role | avg(rate(pg_statio_user_tables_heap_blocks_read_total{role="primary"}[1m])) by(relname) |

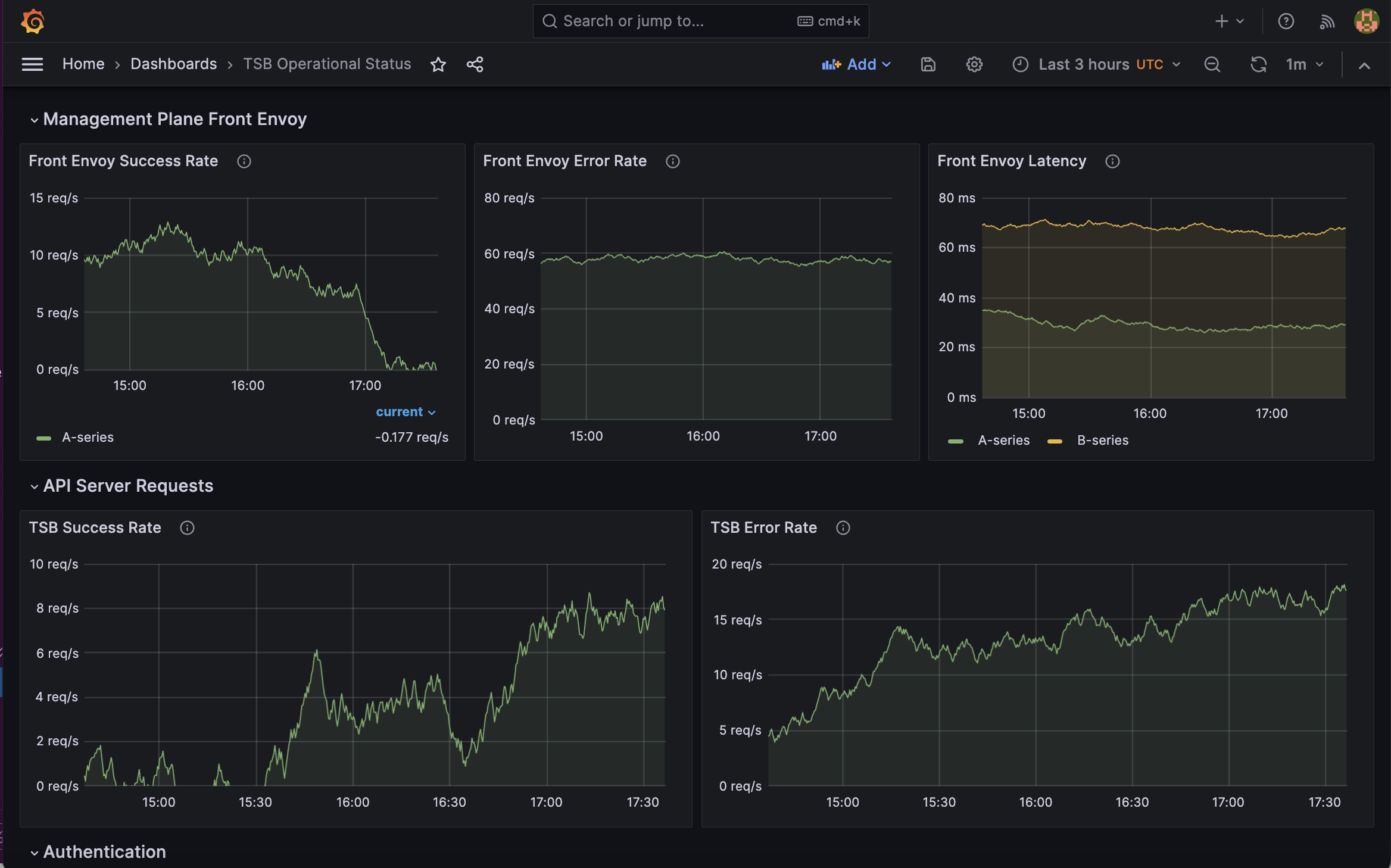

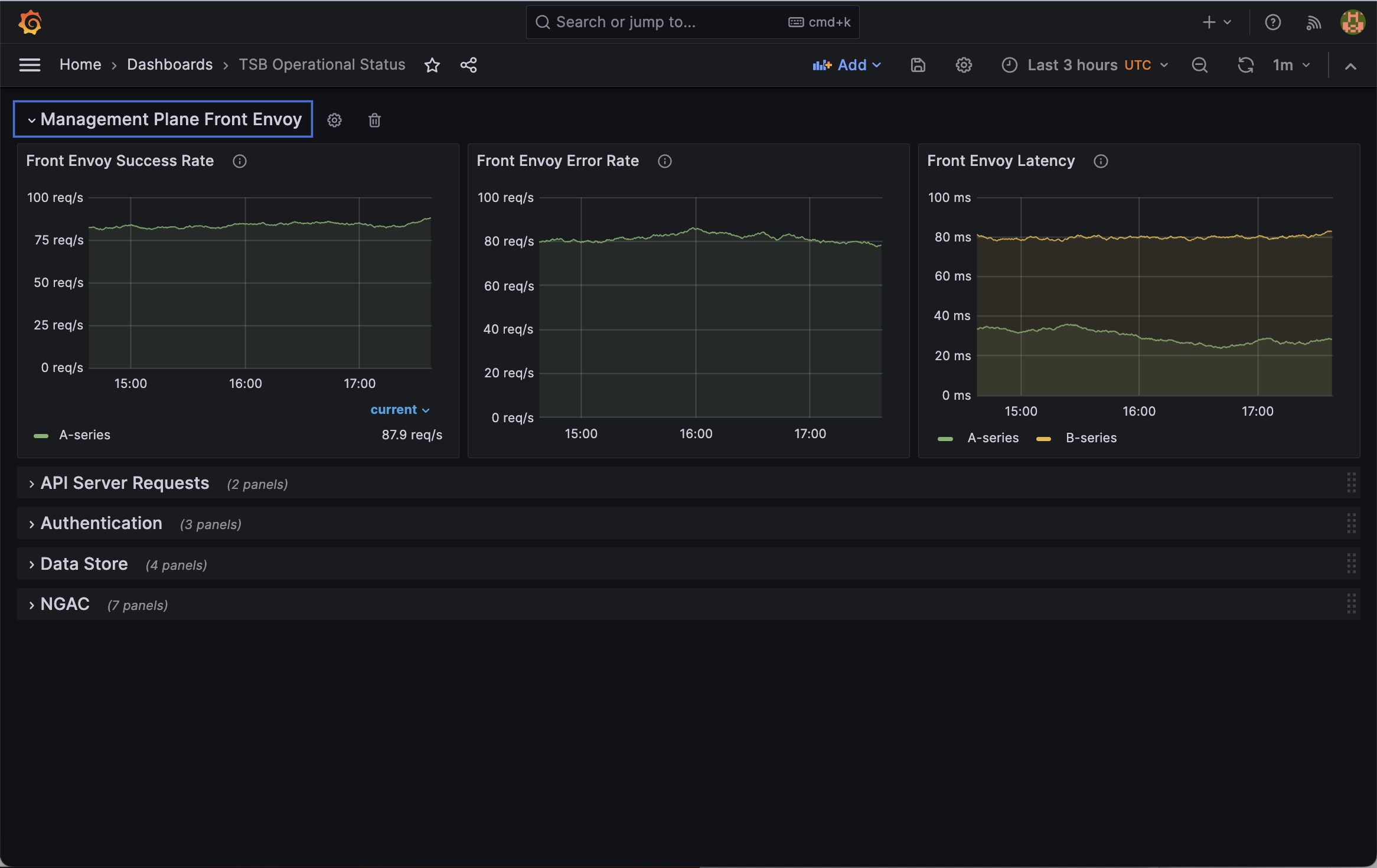

Front Envoy Operational Status

Dedicated operational dashboard for the Front Envoy proxy in the TSB management plane. Surfaces traffic, latency, flow control, and connection metrics to reduce MTTR during incidents.

Success Rate by Upstream Cluster

Rate of successful requests through Front Envoy, broken down by upstream cluster. Covers all inbound gRPC and REST traffic entering the TSB management plane.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_internal_upstream_rq_total | component envoy_response_code | sum(rate(envoy_cluster_internal_upstream_rq_total{envoy_response_code=~"2.\|3.\|401", component="front-envoy"}[1m])) by (envoy_cluster_name) |

Error Rate by Upstream Cluster

Rate of failed requests through Front Envoy (non-2xx, non-3xx, non-401 response codes), broken down by upstream cluster and response code.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_internal_upstream_rq_total | component envoy_response_code | sum(rate(envoy_cluster_internal_upstream_rq_total{envoy_response_code!~"2.\|3.\|401", component="front-envoy"}[1m])) by (envoy_cluster_name, envoy_response_code) |
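PromQL regex matchers are fully anchored, so the selectors in the success and error panels only match label values that the whole pattern covers. The Python sketch below mimics that behavior with re.fullmatch so you can check how the pattern treats a given value. The sample label values are assumptions; verify the values your Front Envoy actually exports for envoy_response_code before drawing conclusions.

```python
import re

# Pattern copied from the success and error rate panels.
SUCCESS_PATTERN = re.compile(r"2.|3.|401")


def is_success(response_code: str) -> bool:
    """Return True if the label value matches the pattern in full, as PromQL requires."""
    return SUCCESS_PATTERN.fullmatch(response_code) is not None


# Hypothetical label values, chosen to show the effect of anchoring.
for value in ["20", "200", "302", "401", "503"]:
    print(value, is_success(value))
```

Because `.` matches exactly one character, `2.` and `3.` match two-character values only. If the label holds full three-digit codes, a pattern such as `2..|3..|401` would be needed to cover the 2xx and 3xx classes.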

Request Latency by Upstream Cluster

Front Envoy upstream request latency percentiles (P99, P95, P50), broken down by upstream cluster. Latency spikes here indicate downstream or upstream congestion.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_internal_upstream_rq_time_bucket | component | histogram_quantile(0.99, sum(rate(envoy_cluster_internal_upstream_rq_time_bucket{component="front-envoy"}[1m])) by (le, envoy_cluster_name)) |
| envoy_cluster_internal_upstream_rq_time_bucket | component | histogram_quantile(0.95, sum(rate(envoy_cluster_internal_upstream_rq_time_bucket{component="front-envoy"}[1m])) by (le, envoy_cluster_name)) |
| envoy_cluster_internal_upstream_rq_time_bucket | component | histogram_quantile(0.50, sum(rate(envoy_cluster_internal_upstream_rq_time_bucket{component="front-envoy"}[1m])) by (le, envoy_cluster_name)) |
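Several panels on this page use histogram_quantile to turn cumulative bucket counts into a percentile estimate. The Python sketch below follows the same general approach for classic histograms: find the bucket that contains the requested rank, then interpolate linearly inside it. It is a simplified illustration with hypothetical bucket boundaries, not the Prometheus implementation.

```python
import math


def histogram_quantile(q: float, buckets: list[tuple[float, float]]) -> float:
    """Estimate the q-quantile from cumulative (upper_bound, count) buckets.

    Requires 0 < q <= 1 and at least two buckets, the last one with
    upper_bound == math.inf. Returns NaN when the histogram is empty.
    """
    if not 0 < q <= 1:
        raise ValueError("q must be in (0, 1]")
    buckets = sorted(buckets)
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    # The loop always breaks: the last bucket's count equals the total.
    for i, (upper, count) in enumerate(buckets):
        if count >= rank:
            break
    if math.isinf(upper):
        # The quantile falls beyond the last finite bound, so report that bound.
        return buckets[-2][0]
    lower, below = (0.0, 0.0) if i == 0 else buckets[i - 1]
    return lower + (upper - lower) * (rank - below) / (count - below)


# Hypothetical latency buckets: (upper bound, cumulative count).
BUCKETS = [(0.1, 50), (0.5, 90), (1.0, 99), (math.inf, 100)]
print(histogram_quantile(0.50, BUCKETS))
print(histogram_quantile(0.95, BUCKETS))
```

The estimate can never be more precise than the bucket boundaries allow, which is why a quantile may read as the nearest bucket bound, or as no value at all when no observations were recorded in the window.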

Number of Downstream flow-control paused events that have NOT yet resumed reading

Shows the number of downstream connections (from edge, central, MPC) or streams for which Envoy has paused reading due to flow-control/backpressure and has not yet resumed reading. A value greater than 0 for an extended period indicates congestion.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_http_downstream_flow_control_paused_reading_total | component envoy_http_conn_manager_prefix | sum(envoy_http_downstream_flow_control_paused_reading_total{component="front-envoy", envoy_http_conn_manager_prefix="tsb"}-envoy_http_downstream_flow_control_resumed_reading_total{component="front-envoy", envoy_http_conn_manager_prefix="tsb"}) by (envoy_http_conn_manager_prefix) |
| envoy_http_downstream_flow_control_resumed_reading_total | component envoy_http_conn_manager_prefix | sum(envoy_http_downstream_flow_control_paused_reading_total{component="front-envoy", envoy_http_conn_manager_prefix="tsb"}-envoy_http_downstream_flow_control_resumed_reading_total{component="front-envoy", envoy_http_conn_manager_prefix="tsb"}) by (envoy_http_conn_manager_prefix) |
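The panel subtracts the "resumed" counter from the "paused" counter to obtain the number of pauses still outstanding. The Python sketch below shows that subtraction together with one possible way to decide that the value has stayed above zero for a while. The function names and the choice of three consecutive samples are assumptions for illustration.

```python
def outstanding_pauses(paused_total: int, resumed_total: int) -> int:
    """Pause events that have not been followed by a resume yet."""
    return paused_total - resumed_total


def sustained_congestion(outstanding: list[int], min_samples: int) -> bool:
    """True when the last `min_samples` readings are all above zero."""
    if min_samples <= 0 or len(outstanding) < min_samples:
        return False
    return all(value > 0 for value in outstanding[-min_samples:])


# Hypothetical counter readings taken at successive scrapes: (paused, resumed).
readings = [(10, 10), (14, 12), (15, 13), (18, 15)]
series = [outstanding_pauses(p, r) for p, r in readings]
print(series)
print(sustained_congestion(series, min_samples=3))
```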

Number of upstream flow-control paused events that have NOT yet resumed reading

Shows the number of upstream connections or streams for which Envoy has paused reading due to flow-control/backpressure and has not yet resumed reading. A value greater than 0 for an extended period indicates congestion.

Label meanings:

- tsb: HTTP requests to TSB Bridge pod from tctl clients

- tsb-grpc: gRPC requests to TSB Bridge pod from MPC

- xcp-config-status-tls: xcp-config-status requests to XCP Central from XCP Edge or MPC

- xcp-http-api-tls: HTTP requests to XCP Central diagnostics API

- xcp-tls: all XCP requests to XCP Central from edges or MPC

| Metric Name | Labels | PromQL Expression |

|---|---|---|

envoy_cluster_upstream_flow_control_paused_reading_total | component envoy_cluster_name | sum( envoy_cluster_upstream_flow_control_paused_reading_total{ component="front-envoy", envoy_cluster_name= |

envoy_cluster_upstream_flow_control_resumed_reading_total | component envoy_cluster_name | sum( envoy_cluster_upstream_flow_control_paused_reading_total{ component="front-envoy", envoy_cluster_name= |

Number of upstream flow-control backup events that have NOT yet drained

Represents how many upstream connections or streams are currently in a state where Envoy has buffered more data than it can immediately send because the upstream side is consuming data too slowly. A value greater than 0 for an extended period indicates congestion.

Label meanings:

- tsb: HTTP requests to TSB Bridge pod from tctl clients

- tsb-grpc: gRPC requests to TSB Bridge pod from MPC

- xcp-config-status-tls: xcp-config-status requests to XCP Central from XCP Edge or MPC

- xcp-http-api-tls: HTTP requests to XCP Central diagnostics API

- xcp-tls: all XCP requests to XCP Central from edges or MPC

| Metric Name | Labels | PromQL Expression |

|---|---|---|

envoy_cluster_upstream_flow_control_backed_up_total | component envoy_cluster_name | sum( envoy_cluster_upstream_flow_control_backed_up_total{ component="front-envoy", envoy_cluster_name= |

envoy_cluster_upstream_flow_control_drained_total | component envoy_cluster_name | sum( envoy_cluster_upstream_flow_control_backed_up_total{ component="front-envoy", envoy_cluster_name= |

Upstream Request Timeouts and Pending Overflows

Upstream request timeout rate. Elevated timeouts while upstream flow control is also active point to clients waiting for responses that are queued behind flow-controlled upstream connections.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_upstream_rq_pending_overflow_total | component | sum(rate(envoy_cluster_upstream_rq_pending_overflow_total{component="front-envoy"}[1m])) by (envoy_cluster_name) |
| envoy_cluster_upstream_rq_timeout_total | component | sum(rate(envoy_cluster_upstream_rq_timeout_total{component="front-envoy"}[1m])) by (envoy_cluster_name) |

HTTP/2 Pending Send Bytes by Upstream Cluster

Bytes queued in HTTP/2 send buffers per upstream cluster. A large and growing value means the upstream is not draining data fast enough — a key indicator of flow control pressure.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_http2_pending_send_bytes | component | sum(envoy_cluster_http2_pending_send_bytes{component="front-envoy"}) by (envoy_cluster_name) |

Active Downstream Connections

Number of active (open) downstream connections (from Central, Edges, MPC, and other components) currently held by Front Envoy listeners. A steady increase without a corresponding traffic increase may indicate connection leaks or slow clients.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_listener_downstream_cx_active | component | sum(envoy_listener_downstream_cx_active{component="front-envoy"}) by (job) |

Downstream Connection Rate

Rate of new downstream connections accepted by Front Envoy per second.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_listener_downstream_cx_total | component | sum(rate(envoy_listener_downstream_cx_total{component="front-envoy"}[1m])) by (job) |

Active Upstream Connections by Cluster

Number of active upstream connections from Front Envoy to each backend cluster. Correlate with flow control metrics: high pending send bytes with few upstream connections may indicate connection pool saturation.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_upstream_cx_active | component | sum(envoy_cluster_upstream_cx_active{component="front-envoy"}) by (envoy_cluster_name) |

Healthy Endpoints per Cluster

Number of healthy endpoints in each upstream cluster as seen by Front Envoy. A drop to 0 means Front Envoy has no healthy host to route to and will return 503. Correlate with upstream error spikes.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_membership_healthy | component | envoy_cluster_membership_healthy{component="front-envoy"} |

Upstream Connection Failures and Timeouts

Rate of upstream connection establishment failures (connect_fail) and timeouts (connect_timeout) per cluster. Non-zero values indicate the upstream is unreachable or overloaded — Front Envoy cannot open new connections to serve requests.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_upstream_cx_connect_fail_total | component | sum(rate(envoy_cluster_upstream_cx_connect_fail_total{component="front-envoy"}[1m])) by (envoy_cluster_name) |
| envoy_cluster_upstream_cx_connect_timeout_total | component | sum(rate(envoy_cluster_upstream_cx_connect_timeout_total{component="front-envoy"}[1m])) by (envoy_cluster_name) |

Upstream Connections Destroyed with Active Requests

Rate of upstream connections destroyed while carrying active requests. Each event means in-flight requests were abruptly terminated — callers receive 503 or a stream reset. Sustained non-zero values indicate upstream instability.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| envoy_cluster_upstream_cx_destroy_with_active_rq_total | component | sum(rate(envoy_cluster_upstream_cx_destroy_with_active_rq_total{component="front-envoy"}[1m])) by (envoy_cluster_name) |

GitOps Operational Status

Operational metrics to indicate Cluster GitOps health

GitOps Status

Shows the status of the GitOps component for each cluster.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_enabled | N/A | gitops_enabled |

Accepted Admission Requests

Accepted admission requests for each cluster. This is the rate at which operations are processed by the GitOps relay and sent to TSB.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_admission_count_total | allowed | sum(rate(gitops_admission_count_total{allowed="true"}[1h])) by (cluster_name, component) |

Rejected Admission Requests

Rejected admission requests for each cluster. This is the rate at which operations are processed by the GitOps relay and sent to TSB.

A spike in these metrics may indicate an increase in invalid TSB resources being applied to the Kubernetes clusters, or errors in the admission webhook processing.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_admission_count_total | allowed | sum(rate(gitops_admission_count_total{allowed="false"}[1h])) by (cluster_name, component) |

Admission Review Latency

Admission review latency percentiles grouped by cluster.

The GitOps admission reviews make decisions by forwarding the objects to the Management Plane. This metric helps understand the time it takes to make such decisions.

A spike here may indicate network issues or connectivity issues between the Control Plane and the Management Plane.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_admission_duration_bucket | N/A | histogram_quantile(0.99, sum(rate(gitops_admission_duration_bucket[1h])) by (cluster_name, component, le)) |
| gitops_admission_duration_bucket | N/A | histogram_quantile(0.95, sum(rate(gitops_admission_duration_bucket[1h])) by (cluster_name, component, le)) |

Resources Pushed to TSB

Number of resources pushed to the Management Plane.

This should be equivalent to the admission requests in most cases, but this will also account for object pushes that are done by the background reconcile processes.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_push_count_total | success | sum(rate(gitops_push_count_total{success="true"}[1h])) by (cluster_name, component) |

Failed pushes to TSB

Number of resource pushes to the Management Plane that failed.

This should be equivalent to the failed admission requests in most cases, but this will also account for object pushes that are done by the background reconciliation processes.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_push_count_total | code | sum(rate(gitops_push_count_total{code!="OK"}[1h])) by (cluster_name, component, code) |

Resources Conversions

Number of Kubernetes resources that have been read from the cluster and successfully converted into TSB objects to be pushed to the Management Plane.

The values for this metric should match those in the "Resources Pushed to TSB" panel. A difference between them probably indicates a problem converting the Kubernetes objects to TSB objects.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_convert_count_total | success | sum(rate(gitops_convert_count_total{success="true"}[1h])) by (cluster_name, component) |

Resources conversions errors

Number of Kubernetes resources that have been read from the cluster and failed to be converted into TSB objects.

A spike on this metric indicates that the Kubernetes objects could not be converted to TSB objects and that those resources were not sent to the Management Plane.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| gitops_convert_count_total | success | sum(rate(gitops_convert_count_total{success="false"}[1h])) by (cluster_name, component) |

Global Configuration Distribution

These metrics indicate the overall health of Tetrate Service Bridge and should be considered the starting point for any investigation into issues with Tetrate Service Bridge.

Connected Clusters

This details all clusters connected to and receiving configuration from the management plane.

If this number drops below 1 or a given cluster does not appear in this table it means that the cluster is disconnected. This may happen for a brief period of time during upgrades/re-deploys.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| xcp_central_current_edge_connections | N/A | xcp_central_current_edge_connections |
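The rule described for this panel (a cluster is disconnected when its value drops below 1 or when it is missing from the table) can be expressed as a small check. The Python sketch below assumes the metric yields one value per cluster; the function name, the input layout, and the cluster names are hypothetical.

```python
def disconnected_clusters(expected: set[str], connections: dict[str, float]) -> set[str]:
    """Clusters that are missing from the metric or report fewer than 1 connection.

    `connections` maps a cluster name to its current edge connection count
    (assumed layout; the labels on the real metric may differ).
    """
    return {name for name in expected if connections.get(name, 0) < 1}


# Hypothetical sample: "west" reports 0 connections and "south" is absent.
result = disconnected_clusters(
    expected={"east", "west", "south"},
    connections={"east": 1, "west": 0},
)
print(sorted(result))
```

As noted above, a cluster may show up here briefly during upgrades or re-deploys.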

TSB Error Rate (Humans)

Rate of failed requests to the TSB apiserver from the UI and CLI.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| grpc_server_handled_total | component grpc_code grpc_method grpc_type | sum(rate(grpc_server_handled_total{component="tsb", grpc_code!="OK", grpc_type="unary", grpc_method!="SendAuditLog"}[1m])) by (grpc_code) OR on() vector(0) |

Istio-Envoy Sync Time (P99)

Once XCP has synced with the management plane it creates resources for Istio to configure Envoy. Istio usually distributes these within a second.

If this number starts to exceed 10 seconds then you may need to scale out istiod. In small clusters, it is possible this number is too small to be handled by the histogram buckets so may be nil.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pilot_proxy_convergence_time_bucket | N/A | histogram_quantile(99/100, sum(rate(pilot_proxy_convergence_time_bucket[1m])) by (le, cluster_name)) |

XCP central -> edge Sync Time (P99)

The MPC component translates TSB configuration into XCP objects. XCP central then sends these objects to every Edge connected to it.

This is the time, in milliseconds, that XCP central takes to send the configs to the edges.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| xcp_central_config_propagation_time_ms_bucket | N/A | histogram_quantile(99/100, sum(rate(xcp_central_config_propagation_time_ms_bucket[1m])) by (le, edge)) |

Istiod Errors

Rate of istiod errors broken down by cluster. This graph helps identify clusters that may be experiencing problems. Typically, there should be no errors. Any non-transient errors should be investigated.

Sometimes this graph will show "No data" or these metrics won't exist. This is because istiod only emits these metrics if the errors occur.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| pilot_total_xds_internal_errors | N/A | sum(rate(pilot_xds_write_timeout[1m])) by (cluster_name) + sum(rate(pilot_total_xds_internal_errors[1m])) by (cluster_name) + sum(rate(pilot_total_xds_rejects[1m])) by (cluster_name) + sum(rate(pilot_xds_expired_nonce[1m])) by (cluster_name) + sum(rate(pilot_xds_push_context_errors[1m])) by (cluster_name) + sum(rate(pilot_xds_pushes{type=~".*_senderr"}[1m])) by (cluster_name) OR on() vector(0) |
| pilot_total_xds_rejects | N/A | sum(rate(pilot_xds_write_timeout[1m])) by (cluster_name) + sum(rate(pilot_total_xds_internal_errors[1m])) by (cluster_name) + sum(rate(pilot_total_xds_rejects[1m])) by (cluster_name) + sum(rate(pilot_xds_expired_nonce[1m])) by (cluster_name) + sum(rate(pilot_xds_push_context_errors[1m])) by (cluster_name) + sum(rate(pilot_xds_pushes{type=~".*_senderr"}[1m])) by (cluster_name) OR on() vector(0) |
| pilot_xds_expired_nonce | N/A | sum(rate(pilot_xds_write_timeout[1m])) by (cluster_name) + sum(rate(pilot_total_xds_internal_errors[1m])) by (cluster_name) + sum(rate(pilot_total_xds_rejects[1m])) by (cluster_name) + sum(rate(pilot_xds_expired_nonce[1m])) by (cluster_name) + sum(rate(pilot_xds_push_context_errors[1m])) by (cluster_name) + sum(rate(pilot_xds_pushes{type=~".*_senderr"}[1m])) by (cluster_name) OR on() vector(0) |
| pilot_xds_push_context_errors | N/A | sum(rate(pilot_xds_write_timeout[1m])) by (cluster_name) + sum(rate(pilot_total_xds_internal_errors[1m])) by (cluster_name) + sum(rate(pilot_total_xds_rejects[1m])) by (cluster_name) + sum(rate(pilot_xds_expired_nonce[1m])) by (cluster_name) + sum(rate(pilot_xds_push_context_errors[1m])) by (cluster_name) + sum(rate(pilot_xds_pushes{type=~".*_senderr"}[1m])) by (cluster_name) OR on() vector(0) |
| pilot_xds_pushes | type | sum(rate(pilot_xds_write_timeout[1m])) by (cluster_name) + sum(rate(pilot_total_xds_internal_errors[1m])) by (cluster_name) + sum(rate(pilot_total_xds_rejects[1m])) by (cluster_name) + sum(rate(pilot_xds_expired_nonce[1m])) by (cluster_name) + sum(rate(pilot_xds_push_context_errors[1m])) by (cluster_name) + sum(rate(pilot_xds_pushes{type=~".*_senderr"}[1m])) by (cluster_name) OR on() vector(0) |
| pilot_xds_write_timeout | N/A | sum(rate(pilot_xds_write_timeout[1m])) by (cluster_name) + sum(rate(pilot_total_xds_internal_errors[1m])) by (cluster_name) + sum(rate(pilot_total_xds_rejects[1m])) by (cluster_name) + sum(rate(pilot_xds_expired_nonce[1m])) by (cluster_name) + sum(rate(pilot_xds_push_context_errors[1m])) by (cluster_name) + sum(rate(pilot_xds_pushes{type=~".*_senderr"}[1m])) by (cluster_name) OR on() vector(0) |
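The expression adds several per-cluster error rates and ends with `OR on() vector(0)`. In PromQL, addition binds tighter than `or`, and vector addition only keeps series that exist on both sides, so a cluster that lacks any one of the error metrics drops out of the sum; the trailing `OR on() vector(0)` then supplies a single 0 when nothing remains. The Python sketch below imitates that behavior. The function name, the input layout, and the empty-string key for the fallback series are assumptions for illustration.

```python
def total_error_rate(rates_by_metric: dict[str, dict[str, float]]) -> dict[str, float]:
    """Add per-cluster rates across metrics, keeping only clusters present in all of them.

    When no cluster survives, return a single fallback value of 0, in the
    spirit of `OR on() vector(0)`. The "" key stands for the label-less series.
    """
    metrics = list(rates_by_metric.values())
    common = set.intersection(*(set(m) for m in metrics)) if metrics else set()
    summed = {cluster: sum(m[cluster] for m in metrics) for cluster in common}
    return summed or {"": 0.0}


# Hypothetical rates: "c2" is missing from the second metric, so it is dropped.
print(total_error_rate({
    "pilot_total_xds_rejects": {"c1": 1.0, "c2": 2.0},
    "pilot_xds_write_timeout": {"c1": 0.5},
}))
# One metric has no series at all, so only the fallback remains.
print(total_error_rate({
    "pilot_total_xds_rejects": {},
    "pilot_xds_write_timeout": {"c1": 0.5},
}))
```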

1. Time for config to be visible by XCP Central (P99)

Time it takes for the configuration to be seen by XCP Central in the Management Plane.

This includes:

- The time it takes for MPC to pull configs from TSB

- Time it takes to process the configurations.

- Time it takes to apply the delta to the "tsb" namespace so that configurations are visible by XCP Central.

After this time, XCP Central sees the configurations and distributes them to the workload clusters.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| mpc_config_total_propagation_duration_bucket | N/A | histogram_quantile(99/100, sum(rate(mpc_config_total_propagation_duration_bucket[1m])) by (le)) |

2. Time it takes to send the configs from MP to the workload clusters (P99)

Once XCP Central sees the configurations in the "tsb" namespace, it sends the configs to the workload clusters.

This panel shows the time it takes (P99) to send the configs to each cluster.

| Metric Name | Labels | PromQL Expression |
|---|---|---|
| xcp_central_config_propagation_time_ms_bucket | N/A | histogram_quantile(99/100, sum(rate(xcp_central_config_propagation_time_ms_bucket[1m])) by (le, edge)) |

3. Istio Config generation time (P99)

The time it takes for the TSB agents in the workload cluster to create the Istio configurations for all the namespaces.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

xcp_edge_total_translation_time_in_ms_bucket | N/A | histogram_quantile(99/100,sum(rate(xcp_edge_total_translation_time_in_ms_bucket[1m])) by (le, cluster_name)) |

4. Proxy convergence time (P99)

Time it takes for Istio to distribute the configuration to the Envoy proxies.

If this number exceeds 10 seconds, you may need to scale out istiod. In small clusters, the value may be too small for the histogram buckets to resolve, in which case the panel shows nil.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_proxy_convergence_time_bucket | N/A | histogram_quantile(99/100, sum(rate(pilot_proxy_convergence_time_bucket[1m])) by (le, cluster_name)) |
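
As a rough illustration of how this panel can be read, the Python sketch below classifies a single P99 sample. It assumes the value is expressed in seconds and that a missing sample arrives as `None` or NaN. The function name and the returned labels are hypothetical; only the 10 second threshold comes from the description above.

```python
import math

# Threshold quoted in the panel description.
SCALE_OUT_THRESHOLD_SECONDS = 10.0


def convergence_status(p99_seconds):
    """Classify one P99 proxy convergence sample."""
    if p99_seconds is None or math.isnan(p99_seconds):
        # Expected in small clusters, where the histogram may yield no value.
        return "no-data"
    if p99_seconds > SCALE_OUT_THRESHOLD_SECONDS:
        return "consider-scaling-istiod"
    return "ok"
```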

Istiod / Pilot Control Plane (MP)

Connected Proxies

pilot_xds — total endpoints connected via xDS

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds | cluster_name istio_revision version | sum(pilot_xds{cluster_name= |

Total Error Rate

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_sds_certificate_errors_total | cluster_name istio_revision | sum(rate(pilot_xds_rejects_total{cluster_name= |

pilot_xds_expired_nonce_total | cluster_name istio_revision | sum(rate(pilot_xds_rejects_total{cluster_name= |

pilot_xds_rejects_total | cluster_name istio_revision | sum(rate(pilot_xds_rejects_total{cluster_name= |

Median Proxy Convergence Time

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_proxy_convergence_time_bucket | cluster_name istio_revision | histogram_quantile(0.5, sum(rate(pilot_proxy_convergence_time_bucket{cluster_name= |

Services Known

pilot_services — total services in the mesh

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_services | cluster_name istio_revision | max(pilot_services{cluster_name= |

VirtualServices

pilot_virt_services — total VS known to pilot

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_virt_services | cluster_name istio_revision | max(pilot_virt_services{cluster_name= |

Build & Version Info

istio_build + pilot_info + pilot_xds by version

| Metric Name | Labels | PromQL Expression |

|---|---|---|

istio_build | cluster_name istio_revision | istio_build{cluster_name= |

Istiod Pods

Istiod pod instances connected for the selected revision

| Metric Name | Labels | PromQL Expression |

|---|---|---|

istiod_uptime_seconds | cluster_name istio_revision | istiod_uptime_seconds{cluster_name= |

Connected Proxies by Instance

pilot_xds by proxy version over time. Useful during upgrades to see old vs new proxy versions.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds | cluster_name istio_revision version | sum by (instance)(pilot_xds{cluster_name= |

Istiod CPU Usage (cores)

CPU cores consumed by each istiod process (rate of process_cpu_seconds_total)

| Metric Name | Labels | PromQL Expression |

|---|---|---|

process_cpu_seconds_total | cluster_name component istio_revision | rate(process_cpu_seconds_total{cluster_name= |

Istiod Memory Usage

Resident memory (RSS) of each istiod process

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_memstats_heap_inuse_bytes | cluster_name component istio_revision | go_memstats_heap_inuse_bytes{cluster_name= |

process_resident_memory_bytes | cluster_name component istio_revision | process_resident_memory_bytes{cluster_name= |

Root Cert Expires In

citadel_server_root_cert_expiry_seconds — time remaining before root cert expires. NEGATIVE = EXPIRED!

| Metric Name | Labels | PromQL Expression |

|---|---|---|

citadel_server_root_cert_expiry_seconds | cluster_name istio_revision | min(citadel_server_root_cert_expiry_seconds{cluster_name= |
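
The raw value of this panel is a number of seconds, which is hard to read at a glance. A minimal Python sketch for turning it into a readable message, treating negative values as already expired, could look like this. The function name and message wording are illustrative assumptions.

```python
from datetime import timedelta


def describe_root_cert_expiry(seconds_remaining):
    """Render the remaining lifetime of the root cert as readable text."""
    if seconds_remaining < 0:
        # A negative value means the certificate has already expired.
        return "EXPIRED " + str(timedelta(seconds=-seconds_remaining)) + " ago"
    return "expires in " + str(timedelta(seconds=seconds_remaining))
```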

CSR Rate & Cert Issuance Rate

citadel_server_csr_count — CSRs received. citadel_server_success_cert_issuance_count — successful issuances. Gap = failures.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

citadel_server_csr_count_total | cluster_name istio_revision | sum(rate(citadel_server_csr_count_total{cluster_name= |

citadel_server_success_cert_issuance_count_total | cluster_name istio_revision | sum(rate(citadel_server_success_cert_issuance_count_total{cluster_name= |
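
The gap mentioned above can be computed from the two rates. The Python sketch below assumes both inputs are per-second rates taken over the same window; the function name is hypothetical, and the gap is clamped at zero because the two series may be scraped at slightly different moments.

```python
def issuance_gap(csr_rate, issued_rate):
    """Return (failed_per_second, failure_ratio) for the two rates."""
    failed = max(csr_rate - issued_rate, 0.0)
    ratio = failed / csr_rate if csr_rate > 0 else 0.0
    return failed, ratio
```

For example, 4 CSRs per second with 3 successful issuances per second gives a gap of 1 failure per second, or a quarter of all requests.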

SDS Certificate Errors

pilot_sds_certificate_errors_total — failures fetching SDS key/cert. Non-zero = mTLS issues.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_sds_certificate_errors_total | cluster_name istio_revision | sum(rate(pilot_sds_certificate_errors_total{cluster_name= |

pilot_sds_certificate_errors_total | cluster_name istio_revision | sum(pilot_sds_certificate_errors_total{cluster_name= |

Sidecar Injection Rate

sidecar_injection_requests_total vs sidecar_injection_success_total — gap = failed injections

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sidecar_injection_requests_total | cluster_name istio_revision | sum(rate(sidecar_injection_requests_total{cluster_name= |

sidecar_injection_success_total | cluster_name istio_revision | sum(rate(sidecar_injection_success_total{cluster_name= |

Injection Latency (p50 / p95 / p99)

sidecar_injection_time_seconds — time taken for sidecar injection. High = slow pod starts.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sidecar_injection_time_seconds_bucket | cluster_name istio_revision | histogram_quantile(0.50, sum by (le) (rate(sidecar_injection_time_seconds_bucket{cluster_name= |

sidecar_injection_time_seconds_bucket | cluster_name istio_revision | histogram_quantile(0.95, sum by (le) (rate(sidecar_injection_time_seconds_bucket{cluster_name= |

sidecar_injection_time_seconds_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (le) (rate(sidecar_injection_time_seconds_bucket{cluster_name= |

Validation & Webhook

galley_validation_passed — validated resources. webhook_patch_failures_total — webhook patch failures by reason.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

galley_validation_passed | cluster_name istio_revision | sum by (group, resource) (rate(galley_validation_passed{cluster_name= |

webhook_patch_failures_total | cluster_name istio_revision | sum by (name, reason) (rate(webhook_patch_failures_total{cluster_name= |

Endpoint Health

All the endpoint-related health gauges. Non-zero values on any of these = investigate.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

endpoint_no_pod | cluster_name istio_revision | sum(endpoint_no_pod{cluster_name= |

pilot_eds_no_instances | cluster_name istio_revision | sum(pilot_eds_no_instances{cluster_name= |

pilot_endpoint_not_ready | cluster_name istio_revision | sum(pilot_endpoint_not_ready{cluster_name= |

pilot_k8s_endpoints_pending_pod | cluster_name istio_revision | sum(pilot_k8s_endpoints_pending_pod{cluster_name= |

pilot_no_ip | cluster_name istio_revision | sum(pilot_no_ip{cluster_name= |

Config Conflicts

All conflict gauges. Non-zero = config issues that may cause unexpected routing. pilot_conflict_inbound_listener, pilot_conflict_outbound_listener_tcp_over_current_tcp, pilot_destrule_subsets, pilot_duplicate_envoy_clusters, pilot_dns_cluster_without_endpoints, pilot_vservice_dup_domain.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_conflict_inbound_listener | cluster_name istio_revision | sum(pilot_conflict_inbound_listener{cluster_name= |

pilot_conflict_outbound_listener_tcp_over_current_tcp | cluster_name istio_revision | sum(pilot_conflict_outbound_listener_tcp_over_current_tcp{cluster_name= |

pilot_destrule_subsets | cluster_name istio_revision | sum(pilot_destrule_subsets{cluster_name= |

pilot_dns_cluster_without_endpoints | cluster_name istio_revision | sum(pilot_dns_cluster_without_endpoints{cluster_name= |

pilot_duplicate_envoy_clusters | cluster_name istio_revision | sum(pilot_duplicate_envoy_clusters{cluster_name= |

pilot_vservice_dup_domain | cluster_name istio_revision | sum(pilot_vservice_dup_domain{cluster_name= |

Applied Envoy Filters

Number of successfully applied Envoy Filters in the cluster.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_envoy_filter_status | cluster_name istio_revision result | sum(pilot_envoy_filter_status{cluster_name= |

Errored Envoy Filters

Number of Envoy Filters in the cluster that failed to apply.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_envoy_filter_status | cluster_name istio_revision result | sum(pilot_envoy_filter_status{cluster_name= |

Envoy Filter status

Status of each Envoy Filter in the cluster (applied/error).

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_envoy_filter_status | cluster_name istio_revision | pilot_envoy_filter_status{cluster_name= |

Config Events (pilot_k8s_cfg_events)

Istio config resource events: add/update/delete by type (VirtualService, DestinationRule, Gateway, EnvoyFilter, ServiceEntry, etc). Spikes = someone is pushing config.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_k8s_cfg_events_total | cluster_name istio_revision | sum by (type, event) (rate(pilot_k8s_cfg_events_total{cluster_name= |

Registry Events (pilot_k8s_reg_events)

K8s registry events: Pods, Services, EndpointSlice, Nodes, Namespaces. Spikes = deployments, scaling, node changes.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_k8s_reg_events_total | cluster_name istio_revision | sum by (type, event) (rate(pilot_k8s_reg_events_total{cluster_name= |

Inbound Updates (pilot_inbound_updates)

Updates received by pilot: config, eds, svc, svcdelete. This is the aggregate trigger input before debouncing.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_inbound_updates_total | cluster_name istio_revision | sum by (type) (rate(pilot_inbound_updates_total{cluster_name= |

Push Triggers by Reason

pilot_push_triggers — what is causing pushes: config, endpoint, service, proxy, secret, networks. 'config' = Istio CR changes. 'endpoint' = pod scale events. 'secret' = cert rotations.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_push_triggers_total | cluster_name istio_revision | sum by (type) (rate(pilot_push_triggers_total{cluster_name= |

Debounce Time (p50 / p95 / p99)

pilot_debounce_time — delay between first config event and the merged push entering the queue. High = many events being batched (normal under churn). Very high = too much config churn.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_debounce_time_bucket | cluster_name istio_revision | histogram_quantile(0.50, sum by (le) (rate(pilot_debounce_time_bucket{cluster_name= |

pilot_debounce_time_bucket | cluster_name istio_revision | histogram_quantile(0.95, sum by (le) (rate(pilot_debounce_time_bucket{cluster_name= |

pilot_debounce_time_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (le) (rate(pilot_debounce_time_bucket{cluster_name= |

PushContext Init Time (p50 / p95 / p99)

pilot_pushcontext_init_seconds — time to build the internal push context (service index, config snapshot). High = large mesh with many services/configs.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_pushcontext_init_seconds_bucket | cluster_name istio_revision | histogram_quantile(0.50, sum by (le) (rate(pilot_pushcontext_init_seconds_bucket{cluster_name= |

pilot_pushcontext_init_seconds_bucket | cluster_name istio_revision | histogram_quantile(0.95, sum by (le) (rate(pilot_pushcontext_init_seconds_bucket{cluster_name= |

pilot_pushcontext_init_seconds_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (le) (rate(pilot_pushcontext_init_seconds_bucket{cluster_name= |

Proxy Queue Time (p50 / p95 / p99)

pilot_proxy_queue_time — time a proxy sits in the push queue before being processed. High = istiod overloaded, can't keep up with push rate.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_proxy_queue_time_bucket | cluster_name istio_revision | histogram_quantile(0.50, sum by (le) (rate(pilot_proxy_queue_time_bucket{cluster_name= |

pilot_proxy_queue_time_bucket | cluster_name istio_revision | histogram_quantile(0.95, sum by (le) (rate(pilot_proxy_queue_time_bucket{cluster_name= |

pilot_proxy_queue_time_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (le) (rate(pilot_proxy_queue_time_bucket{cluster_name= |

xDS Push Time by Type (p99)

pilot_xds_push_time — time to generate xDS config per type (CDS, LDS, RDS, EDS, SDS, ECDS, NDS). CDS/RDS usually slowest in large meshes.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_push_time_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (type, le) (rate(pilot_xds_push_time_bucket{cluster_name= |

xDS Send Time (p50 / p95 / p99)

pilot_xds_send_time — time to serialize and send generated config to proxy over gRPC. High = large configs or network issues.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_send_time_bucket | cluster_name istio_revision | histogram_quantile(0.50, sum by (le) (rate(pilot_xds_send_time_bucket{cluster_name= |

pilot_xds_send_time_bucket | cluster_name istio_revision | histogram_quantile(0.95, sum by (le) (rate(pilot_xds_send_time_bucket{cluster_name= |

pilot_xds_send_time_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (le) (rate(pilot_xds_send_time_bucket{cluster_name= |

End-to-End Proxy Convergence Time (p50 / p95 / p99)

pilot_proxy_convergence_time — THE key metric. Total delay from config change to proxy having the config. This is the sum of all pipeline stages. Target: < 1s for most meshes, < 5s for large.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_proxy_convergence_time_bucket | cluster_name istio_revision | histogram_quantile(0.50, sum by (le) (rate(pilot_proxy_convergence_time_bucket{cluster_name= |

pilot_proxy_convergence_time_bucket | cluster_name istio_revision | histogram_quantile(0.95, sum by (le) (rate(pilot_proxy_convergence_time_bucket{cluster_name= |

pilot_proxy_convergence_time_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (le) (rate(pilot_proxy_convergence_time_bucket{cluster_name= |

xDS Pushes by Type

pilot_xds_pushes — push count per xDS type (CDS, LDS, RDS, EDS, SDS, ECDS, NDS). Rate shows how active the control plane is.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_pushes_total | cluster_name istio_revision | sum by (type) (rate(pilot_xds_pushes_total{cluster_name= |

Config Size Pushed by Type (avg bytes)

pilot_xds_config_size_bytes — average size of config pushed per xDS type. CDS/RDS are typically the largest. Large config = slow pushes, high proxy memory.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_config_size_bytes_count | cluster_name istio_revision | sum by (type) (rate(pilot_xds_config_size_bytes_sum{cluster_name= |

pilot_xds_config_size_bytes_sum | cluster_name istio_revision | sum by (type) (rate(pilot_xds_config_size_bytes_sum{cluster_name= |
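
An average derived from a Prometheus histogram is usually the rate of the `_sum` series divided by the rate of the `_count` series. Assuming this panel follows that pattern, the Python sketch below reproduces the arithmetic per xDS type. The function name and input shape (dictionaries keyed by type) are illustrative assumptions.

```python
def average_push_size(sum_rate_by_type, count_rate_by_type):
    """Return average bytes per push for each xDS type.

    sum_rate_by_type:   bytes pushed per second, keyed by xDS type
    count_rate_by_type: pushes per second, keyed by xDS type
    """
    averages = {}
    for xds_type, bytes_per_sec in sum_rate_by_type.items():
        pushes_per_sec = count_rate_by_type.get(xds_type, 0.0)
        if pushes_per_sec > 0:
            # Types with no pushes in the window are omitted rather than
            # reported as zero or dividing by zero.
            averages[xds_type] = bytes_per_sec / pushes_per_sec
    return averages
```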

Expired Nonces by Type

pilot_xds_expired_nonce — proxy sent a request with an outdated nonce (it was too slow to ACK before the next push). High = proxy can't keep up with config churn.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_expired_nonce_total | cluster_name istio_revision | sum by (type) (rate(pilot_xds_expired_nonce_total{cluster_name= |

Config Size by Type (p99 bytes)

pilot_xds_config_size_bytes as p99 histogram — worst-case config size per push type.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_config_size_bytes_bucket | cluster_name istio_revision | histogram_quantile(0.99, sum by (type, le) (rate(pilot_xds_config_size_bytes_bucket{cluster_name= |

Largest Request Received

pilot_xds_recv_max — max size of an xDS request received from any proxy. Very large = proxy sending huge ACKs or NACKs.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_recv_max | cluster_name istio_revision | max(pilot_xds_recv_max{cluster_name= |

Total Config Bytes Pushed / sec

Total data rate of config being pushed across all types. High = istiod spending significant bandwidth on xDS.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

pilot_xds_config_size_bytes_sum | cluster_name istio_revision | sum by (type) (rate(pilot_xds_config_size_bytes_sum{cluster_name= |

MPC Operational Status

Operational metrics to indicate Management Plane Controller (MPC) health.

Propagate Config Objects duration

How long it takes to propagate all the configs from TSB to XCP, measured from the moment MPC retrieves the configs until they are applied in the k8s namespace. It is composed of:

- Reception time: how long it takes for the GetAllConfigObjects gRPC method to retrieve all configuration objects.

- Conversion time: how long it takes to compute the model cache, execute the conversions and apply them to the k8s namespace.

These metrics are distributed into buckets that give seconds-level accuracy. The square steps shown on the dashboard represent fluctuations between adjacent buckets.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_config_total_process_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_config_total_process_duration_bucket{error="", component="mpc"}[5m])) by (le,component)) |

mpc_config_total_process_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_config_total_process_duration_bucket{error="true", component="mpc"}[5m])) by (le,component)) |

mpc_config_total_propagation_duration_bucket | component | histogram_quantile(0.99, sum(rate(mpc_config_total_propagation_duration_bucket{component="mpc"}[5m])) by (le,component)) |

mpc_get_all_config_objects_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_get_all_config_objects_duration_bucket{error="", component="mpc"}[5m])) by (le,component)) |

mpc_get_all_config_objects_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_get_all_config_objects_duration_bucket{error="true", component="mpc"}[5m])) by (le,component)) |

Received configs

The number of resources sent from TSB to MPC.

This metric shows the number of objects that are created, updated, and deleted as part of a configuration push from MPC to XCP.

This metric can be used together with the XCP push operations and push duration to get an understanding of how the amount of resources being pushed to XCP affects the time it takes for the entire configuration push operation to complete.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_tsb_config_received_count | component resource | max(max_over_time(mpc_tsb_config_received_count{resource="", component="mpc"}[5m])) by(component) |

Config Processing duration

Time it takes to process an entire config set. It shows the details about the amount of time spent pre-processing the configurations, converting them to XCP, and pushing them to the k8s cluster.

These metrics are distributed into buckets that give seconds-level accuracy. The square steps shown on the dashboard represent fluctuations between adjacent buckets.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_config_conversion_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_config_conversion_duration_bucket{error="", component="mpc"}[5m])) by (le,component)) |

mpc_config_pre_process_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_config_pre_process_duration_bucket{error="", component="mpc"}[5m])) by (le,component)) |

mpc_config_total_process_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_config_total_process_duration_bucket{error="", component="mpc"}[5m])) by (le,component)) |

mpc_xcp_config_push_duration_bucket | component error | histogram_quantile(0.99, sum(rate(mpc_xcp_config_push_duration_bucket{error="", component="mpc"}[5m])) by (le,component)) |

Received configs by type

Configuration updates received from TSB are processed by MPC and translated into XCP resources. This metric shows the number of objects of each type MPC will convert.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_tsb_config_received_count | component resource | sum(max_over_time(mpc_tsb_config_received_count{resource!="", component="mpc"}[5m])) by(component, resource) |

Total Conversion Time by Type every 5m

Time it takes to convert TSB resources to the XCP APIs.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_xcp_conversion_duration_sum | component | sum(rate(mpc_xcp_conversion_duration_sum{component="mpc"}[5m])) by (resource) |

Conversion Time by Type every 5m

Time it takes to convert TSB resources to the XCP APIs.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_xcp_conversion_duration_bucket | N/A | histogram_quantile(0.99, sum(rate(mpc_xcp_conversion_duration_bucket[5m])) by (le, resource)) |

Conversions by Resource every 5m

Conversions by resource executed in a time period. This can be used to understand the throughput of the MPC conversions.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_xcp_conversion_duration_count | component resource | sum(increase(mpc_xcp_conversion_duration_count{resource!="", component="mpc"}[5m])) by (resource) |

Conversions Invalidations by Resource every 5m

Conversion invalidations by resource executed in a time period. This can be used to understand the throughput of the MPC conversions.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_xcp_conversion_invalidation_duration_count | component resource | sum(increase(mpc_xcp_conversion_invalidation_duration_count{component="mpc", resource!=""}[5m])) by (resource) |

Conversion Invalidation Time every 5m

Time it takes to invalidate TSB resources for a given conversion to the XCP APIs.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_xcp_conversion_invalidation_duration_bucket | component resource | histogram_quantile(0.99, sum(rate(mpc_xcp_conversion_invalidation_duration_bucket{component="mpc", resource!=""}[5m])) by (le, resource)) |

Updates from TSB every 5m

Configuration and onboarded cluster messages received from TSB.

The number of update messages may increase or decrease based on the time it takes for MPC to fully process the messages. The longer processing takes, the less frequently config updates are retrieved.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

grpc_client_handled_total | component grpc_code grpc_method | sum(increase(grpc_client_handled_total{component="mpc", grpc_method="GetAllConfigObjects", grpc_code="OK"}[5m])) or on() vector(0) |

grpc_client_handled_total | component grpc_code grpc_method | sum(increase(grpc_client_handled_total{component="mpc", grpc_method="GetAllClusters", grpc_code="OK"}[5m])) or on() vector(0) |

grpc_client_handled_total | component grpc_code grpc_method | sum(increase(grpc_client_handled_total{component="mpc", grpc_method="GetAllConfigObjects", grpc_code!="OK"}[5m])) or on() vector(0) |

grpc_client_handled_total | component grpc_code grpc_method | sum(increase(grpc_client_handled_total{component="mpc", grpc_method="GetAllClusters", grpc_code!="OK"}[5m])) or on() vector(0) |
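
The four series above split each gRPC method into successful (`OK`) and failed (non-`OK`) calls. A minimal Python sketch for turning one such pair into an error ratio is shown below. The function name is hypothetical, and the inputs are assumed to be the 5 minute increases of the OK and non-OK series for the same method.

```python
def grpc_error_ratio(ok_count, error_count):
    """Return the fraction of calls that ended with a non-OK code."""
    total = ok_count + error_count
    if total == 0:
        # No calls in the window: report no errors instead of dividing by zero.
        return 0.0
    return error_count / total
```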

MPC to XCP pushed configs

The number of resources that are pushed to XCP.

This metric shows the number of objects that are created, updated, and deleted as part of a configuration push from MPC to XCP. It also shows how many fetch calls to the k8s api server are done.

This metric can be used together with the TSB to MPC sent configs and XCP push operations and push duration to get an understanding of how the amount of resources being pushed to XCP affects the time it takes for the entire configuration push operation to complete.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_xcp_config_create_ops | component | sum(mpc_xcp_config_create_ops{component="mpc"}) |

mpc_xcp_config_delete_ops | component | sum(mpc_xcp_config_delete_ops{component="mpc"}) |

mpc_xcp_config_fetch_ops | component | sum(mpc_xcp_config_fetch_ops{component="mpc"}) |

mpc_xcp_config_update_ops | component | sum(mpc_xcp_config_update_ops{component="mpc"}) |

MPC to XCP pushed configs error

The number of resources that failed while pushing to XCP.

This metric shows the number of objects that fail to be created, updated, or deleted as part of a configuration push from MPC to XCP. It also shows the number of failed fetch calls to the k8s api server.

This metric can be used together with the MPC to TSB push configs and the XCP push operations and push duration to get an understanding of how the amount of resources being pushed to XCP affects the time it takes for the entire configuration push operation to complete.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

mpc_xcp_config_create_ops_err | component | sum(mpc_xcp_config_create_ops_err{component="mpc"}) |

mpc_xcp_config_delete_ops_err | component | sum(mpc_xcp_config_delete_ops_err{component="mpc"}) |

mpc_xcp_config_fetch_ops_err | component | sum(mpc_xcp_config_fetch_ops_err{component="mpc"}) |

mpc_xcp_config_update_ops_err | component | sum(mpc_xcp_config_update_ops_err{component="mpc"}) |

Config Status updates every 5m

Config Status update messages sent over the gRPC streams, from XCP to MPC and from MPC to TSB.

This metric can help understand how messages are queued in TSB when it is under load. The value for both metrics should always be the same. If the Received by TSB metric has a value lower than the MPC one, it means TSB is under load and cannot process all messages sent by MPC as fast as MPC is sending them.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

grpc_client_msg_received_total | component grpc_method | sum(increase(grpc_client_msg_received_total{grpc_method="Report",component="mpc"}[5m])) or on() vector(0) |

grpc_client_msg_sent_total | component grpc_method | sum(increase(grpc_client_msg_sent_total{grpc_method="PushStatus",component="mpc"}[5m])) or on() vector(0) |

grpc_server_msg_received_total | component grpc_method | sum(increase(grpc_server_msg_received_total{grpc_method="PushStatus", component="tsb"}[5m])) or on() vector(0) |
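
The comparison described above can be sketched in Python as follows. The function name, the `tolerance` parameter and the returned dictionary are illustrative assumptions. The inputs are assumed to be the 5 minute increases of the messages sent by MPC and the messages received by TSB; since `increase()` extrapolates, a small tolerance may be needed in practice.

```python
def tsb_status_lag(sent_by_mpc, received_by_tsb, tolerance=0):
    """Compare messages sent by MPC with messages received by TSB."""
    lag = max(sent_by_mpc - received_by_tsb, 0)
    return {
        "lag": lag,
        # TSB is considered under load when it received noticeably fewer
        # messages than MPC sent during the same window.
        "tsb_under_load": lag > tolerance,
    }
```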

Config Status updates processed every 5m

This is the number of config status updates processed by the Management Plane Controller (MPC): updates received from XCP that are to be sent to TSB.

There are two gRPC streams, one that connects XCP to MPC and another one that connects MPC to TSB.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

permanent_stream_operation_total | component error name | sum(increase(permanent_stream_operation_total{name="StatusPush", error="", component="mpc"}[5m])) or on() vector(0) |

permanent_stream_operation_total | component error name | sum(increase(permanent_stream_operation_total{name="StatusPull", error="", component="mpc"}[5m])) or on() vector(0) |

permanent_stream_operation_total | component error name | sum(increase(permanent_stream_operation_total{name="StatusPush", error!="", component="mpc"}[5m])) or on() vector(0) |

permanent_stream_operation_total | component error name | sum(increase(permanent_stream_operation_total{name="StatusPull", error!="", component="mpc"}[5m])) or on() vector(0) |

Config Status stream connection attempts every 5m

The number of connection (and reconnection) attempts on the config status updates streams. MPC sends the config status updates over a permanently connected gRPC stream to TSB. At the same time, XCP sends them to MPC. This metric shows the number of connections and reconnections that happened on each stream.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="StatusPull", error="" }[5m])) or on() vector(0) |

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="StatusPull", error!="" }[5m])) or on() vector(0) |

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="StatusPush", error="" }[5m])) or on() vector(0) |

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="StatusPush", error!="" }[5m])) or on() vector(0) |

TSB Handled Status Reports 5m

Number of config status reports handled by TSB. Each received config status report is either handled or skipped.

- Handled: The status report is processed and stored directly or, if --max-status-report-workers is > 1, enqueued for async processing.

- Skipped: For duplicated status reports.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

config_handling_duration_count | component | sum(increase(config_handling_duration_count{component="tsb"}[5m])) by(skip) |

Config status cache operations every 5m

Number of operations done in the config status cache when receiving new config statuses.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

config_status_cache_add_total | error | sum(increase(config_status_cache_add_total{error="false"}[5m])) |

config_status_cache_add_total | error | sum(increase(config_status_cache_add_total{error="true"}[5m])) or on() vector(0) |

config_status_cache_check_total | N/A | sum(increase(config_status_cache_check_total[5m])) |

config_status_cache_check_total | error | sum(increase(config_status_cache_check_total{error="true"}[5m])) or on() vector(0) |

config_status_cache_invalidate_total | N/A | sum(increase(config_status_cache_invalidate_total[5m])) |

grpc_server_msg_received_total | component grpc_method | sum(increase(grpc_server_msg_received_total{grpc_method="PushStatus", component="tsb"}[5m])) |

TSB Processed Status Reports in 5m

The number of config status reports processed by TSB. This number must equal the number of handled reports minus the skipped ones.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

config_status_report_work_duration_bucket | component | histogram_quantile(0.99, sum(rate(config_status_report_work_duration_bucket{component="tsb"}[5m])) by (le, skip)) |

TSB Handling Status Reports Duration 5m

The P99 duration, in milliseconds, of handling status reports received by TSB. Each received config status report is either handled or skipped.

- Handled: The status report is processed and stored directly or, if --max-status-report-workers is > 1, enqueued for async processing.

- Skipped: For duplicated status reports.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

config_handling_duration_bucket | component | histogram_quantile(0.99, sum(rate(config_handling_duration_bucket{component="tsb"}[5m])) by (le, skip)) |

Config status cache operations by event type every 5m

Number of operations done in the cache by event type.

This metric helps understand the amount of event processing that can be skipped on the TSB side when receiving events because TSB already knows about them, and helps understand how status event reporting relates to load on the TSB side.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

config_status_cache_add_total | component error | sum(increase(config_status_cache_add_total{error="false", component="tsb"}[5m])) by (type) |

config_status_cache_check_total | component error | sum(increase(config_status_cache_check_total{error="false", component="tsb"}[5m])) by (type) |

TSB Processing Status Reports Duration 5m

The P99 duration, in milliseconds, of processing config status reports handled by TSB. Processing a config status report involves analysing it, applying/storing the result, and propagating it to parents and dependants.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

config_status_report_work_duration_bucket | component | histogram_quantile(0.99, sum(rate(config_status_report_work_duration_bucket{component="tsb"}[5m])) by (le, skip)) |

Status Reports Work per Shard Distribution

Only applies when tsb --max-status-report-workers is > 1. Distribution of the status report work across the different shards.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sharded_queue_work_duration_count | component name | sum(increase(sharded_queue_work_duration_count{name="status-reports", component="tsb"}[5m])) by (component, shard) |
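One way to read this panel is to turn the per-shard counts into shares of the total and compare the busiest shard against the average. The Python sketch below shows that arithmetic. The shard names and counts are hypothetical, and the imbalance ratio is an illustrative measure, not something TSB reports.

```python
def shard_shares(work_counts):
    """Return each shard's share of the total work as a percentage."""
    total = sum(work_counts.values())
    if total == 0:
        return {shard: 0.0 for shard in work_counts}
    return {shard: 100.0 * count / total for shard, count in work_counts.items()}


def imbalance_ratio(work_counts):
    """Busiest shard's count divided by the mean count (1.0 means even)."""
    counts = list(work_counts.values())
    mean = sum(counts) / len(counts)
    return max(counts) / mean if mean else 1.0


# Hypothetical 5-minute work counts per shard.
counts = {"0": 50, "1": 30, "2": 20}
print(shard_shares(counts))
print(imbalance_ratio(counts))
```

A ratio that stays well above 1.0 suggests that a few shards receive most of the work.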

Work executions every 5m

Only applies when tsb --max-status-report-workers is > 1. The number of status processing jobs executed.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sharded_queue_work_duration_count | component | sum(increase(sharded_queue_work_duration_count{component="tsb"}[5m])) by (name) |

Status updates worker time every 5m

Only applies when tsb --max-status-report-workers is > 1. Time it takes for workers to process a single status update event.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sharded_queue_work_duration_bucket | N/A | histogram_quantile(0.99, sum(rate(sharded_queue_work_duration_bucket[5m])) by (le, name)) |

TSB Status Updates Enqueue Delay 5m

Only applies when tsb --max-status-report-workers is > 1. The P99 delay in milliseconds from when a config status report starts enqueuing until the queue accepts (enqueues) it.

If the P99 delay is high, a shard of the queue has filled up and reached its maximum capacity. If the delay exceeds several minutes, there is probably a deadlock.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sharded_queue_enqueue_delay_bucket | name | histogram_quantile(0.99, sum(rate(sharded_queue_enqueue_delay_bucket{name="status-reports"}[5m])) by (le, name)) |

TSB Status Updates Enqueue Delay 5m

Only applies when tsb --max-status-report-workers is > 1. The delay in milliseconds from when a config status report starts enqueuing until the queue accepts (enqueues) it.

The config status report queue has a fixed number of elements it can hold per bucket. If the enqueuing latency goes up, there is contention in the queue, and no more elements can be placed until the queue frees space by consuming its enqueued elements.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sharded_queue_enqueue_delay_bucket | name | sum(rate(sharded_queue_enqueue_delay_bucket{name="status-reports"}[5m])) by (le) |
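The relationship between a fixed-capacity queue and enqueue delay can be sketched with a small deterministic simulation. This is a simplified model under stated assumptions (one FIFO queue, a single worker, a constant processing time). It is not TSB's implementation, and the function name and parameters are hypothetical.

```python
def simulate_enqueue_delays(arrivals_ms, capacity, service_ms):
    """Return the enqueue delay in ms for each item of a bounded FIFO queue.

    arrivals_ms: sorted times at which producers try to enqueue an item.
    capacity:    maximum number of items waiting in the queue.
    service_ms:  constant time the single worker spends on each item.
    """
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    started = []  # time at which the worker takes each item off the queue
    delays = []
    for i, arrival in enumerate(arrivals_ms):
        # A slot frees up once the item `capacity` positions ahead is dequeued.
        slot_free_at = started[i - capacity] if i >= capacity else arrival
        enqueued_at = max(arrival, slot_free_at)
        # The worker picks this item up after finishing the previous one.
        worker_free_at = started[i - 1] + service_ms if i > 0 else enqueued_at
        started.append(max(enqueued_at, worker_free_at))
        delays.append(enqueued_at - arrival)
    return delays


# A burst of five reports at t=0 with room for two waiting items.
print(simulate_enqueue_delays([0, 0, 0, 0, 0], capacity=2, service_ms=10))
# Reports that arrive more slowly than they are processed never wait.
print(simulate_enqueue_delays([0, 100, 200], capacity=1, service_ms=10))
```

In this model the delay stays at zero while the queue has free slots and grows with each additional item once the queue is full, which is the pattern to look for in the panel.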

TSB Status Updates Worker Delay 5m

Only applies when tsb --max-status-report-workers is > 1. The P99 delay in milliseconds between a config status report being received and being processed by the work queue.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sharded_queue_work_delay_bucket | name | histogram_quantile(0.99, sum(rate(sharded_queue_work_delay_bucket{name="status-reports"}[5m])) by (le, name)) |

TSB Status Updates Worker Delay 5m

Only applies when tsb --max-status-report-workers is > 1. The delay in milliseconds between a config status report being received and being processed by the work queue.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

sharded_queue_work_delay_bucket | name | sum(rate(sharded_queue_work_delay_bucket{name="status-reports"}[5m])) by (le) |

Cluster Status Update from XCP every 5m

Cluster status update messages received from XCP over a gRPC stream.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

grpc_client_msg_received_total | component grpc_method | sum(increase(grpc_client_msg_received_total{component="mpc", grpc_method="GetClusterState" }[5m])) or on() vector(0) |
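The expression above combines increase() with an `or on() vector(0)` fallback so that the panel shows 0 instead of "no data" when no series matches. The Python sketch below mimics both ideas on raw counter samples. It is a simplification: it handles counter resets but ignores the extrapolation to the window boundaries that PromQL's increase() applies, and the sample values are made up.

```python
def counter_increase(samples):
    """Total increase of a counter over its samples, tolerating resets."""
    increase = 0.0
    for previous, current in zip(samples, samples[1:]):
        if current >= previous:
            increase += current - previous
        else:
            # The counter restarted from zero, so everything it shows is new.
            increase += current
    return increase


def total_or_zero(series):
    """Sum the increase of every series; fall back to 0 when there is none.

    series: dict mapping a series name to its list of counter samples.
    """
    if not series:
        return 0.0  # plays the role of `or on() vector(0)`
    return sum(counter_increase(samples) for samples in series.values())


print(total_or_zero({"mpc-0": [10, 15, 3, 8]}))
print(total_or_zero({}))
```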

Cluster updates from XCP processed every 5m

The number of cluster status updates received by the Management Plane Controller (MPC) from XCP that must be processed and sent to TSB.

XCP sends the cluster status updates (e.g. services deployed in the cluster) over a permanently connected gRPC stream to MPC. This metric shows the number of messages received and processed by MPC on that stream.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

permanent_stream_operation_total | error name | sum(increase(permanent_stream_operation_total{name="ClusterStateFromXCP", error="" }[5m])) or on() vector(0) |

permanent_stream_operation_total | error name | sum(increase(permanent_stream_operation_total{name="ClusterStateFromXCP", error!="" }[5m])) or on() vector(0) |
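The two expressions above split the same counter by whether the error label is empty. In PromQL, the matcher error="" also selects series that have no error label at all. The following Python sketch reproduces that partitioning on hypothetical label sets and values.

```python
def split_by_error(series):
    """Return (successful_total, failed_total) for labelled counter values.

    series: list of (labels, value) pairs, where labels is a dict.
    A missing "error" label is treated like an empty one, matching how
    the PromQL matcher error="" behaves.
    """
    successful = sum(v for labels, v in series if labels.get("error", "") == "")
    failed = sum(v for labels, v in series if labels.get("error", "") != "")
    return successful, failed


# Hypothetical 5-minute increases; the error value is made up.
sample = [
    ({"name": "ClusterStateFromXCP", "error": ""}, 40),
    ({"name": "ClusterStateFromXCP"}, 2),
    ({"name": "ClusterStateFromXCP", "error": "unavailable"}, 3),
]
print(split_by_error(sample))
```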

XCP cluster status updates Sent to TSB every 5m

This is the number of cluster status updates that are processed by the Management Plane Controller (MPC) to be sent to TSB.

MPC sends the cluster status updates over a gRPC stream that is permanently connected to TSB, and this metric shows the number of cluster updates that are processed by MPC and sent to TSB on that stream.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

permanent_stream_operation_total | error name | sum(increase(permanent_stream_operation_total{name="ClusterUpdates", error=""}[5m])) or on() vector(0) |

permanent_stream_operation_total | error name | sum(increase(permanent_stream_operation_total{name="ClusterUpdates", error!=""}[5m])) or on() vector(0) |

Cluster status updates to TSB stream connection attempts every 5m

The number of connection (and reconnection) attempts on the cluster status updates stream. MPC sends the cluster status updates over a permanently connected gRPC stream to TSB. This metric shows the number of connections and reconnections that happened on that stream.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="ClusterUpdates", error=""}[5m])) or on() vector(0) |

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="ClusterUpdates", error!=""}[5m])) or on() vector(0) |

Cluster updates from XCP stream connection attempts every 5m

The number of connection (and reconnection) attempts on the cluster status updates from XCP stream. XCP sends the cluster status updates over a permanently connected gRPC stream to MPC. This metric shows the number of connections and reconnections that happened on that stream.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="ClusterStateFromXCP", error="" }[5m])) or on() vector(0) |

permanent_stream_connection_attempts_total | error name | sum(increase(permanent_stream_connection_attempts_total{name="ClusterStateFromXCP", error!="" }[5m])) or on() vector(0) |

GC Count by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_gc_duration_seconds_count | component plane | sum(rate(go_gc_duration_seconds_count{component=~"tsb|mpc|xcp", plane="management"}[5m])) by (component) |

GC Duration by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_gc_duration_seconds_count | component plane | sum(rate(go_gc_duration_seconds_sum{component= |

go_gc_duration_seconds_sum | component plane | sum(rate(go_gc_duration_seconds_sum{component= |

Heap Allocations by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_memstats_heap_alloc_bytes | component plane | sum(max_over_time(go_memstats_heap_alloc_bytes{component=~"tsb|mpc|xcp", plane="management"}[5m])) by (component) |

Heap Objects by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_memstats_heap_objects | component plane | sum(max_over_time(go_memstats_heap_objects{component=~"tsb|mpc|xcp", plane="management"}[5m])) by (component) |

Next GC Target by Component in Management Plane

The heap memory size during the next GC cycle. GC is used to guarantee that the value is no less than the value of

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_memstats_next_gc_bytes | component plane | sum(max_over_time(go_memstats_next_gc_bytes{component=~"tsb|mpc|xcp", plane="management"}[5m])) by (component) |

Heap Utilization Percentage by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_memstats_heap_idle_bytes | component | ( sum(go_memstats_heap_inuse_bytes{component="xcp"}) by (component) / sum(go_memstats_heap_idle_bytes{component="xcp"} + go_memstats_heap_inuse_bytes{component="xcp"}) by (component) ) * 100 |

go_memstats_heap_idle_bytes | component | ( sum(go_memstats_heap_inuse_bytes{component="tsb"}) by (component) / sum(go_memstats_heap_idle_bytes{component="tsb"} + go_memstats_heap_inuse_bytes{component="tsb"}) by (component) ) * 100 |

go_memstats_heap_idle_bytes | component | ( sum(go_memstats_heap_inuse_bytes{component="mpc"}) by (component) / sum(go_memstats_heap_idle_bytes{component="mpc"} + go_memstats_heap_inuse_bytes{component="mpc"}) by (component) ) * 100 |

go_memstats_heap_inuse_bytes | component | ( sum(go_memstats_heap_inuse_bytes{component="xcp"}) by (component) / sum(go_memstats_heap_idle_bytes{component="xcp"} + go_memstats_heap_inuse_bytes{component="xcp"}) by (component) ) * 100 |

go_memstats_heap_inuse_bytes | component | ( sum(go_memstats_heap_inuse_bytes{component="tsb"}) by (component) / sum(go_memstats_heap_idle_bytes{component="tsb"} + go_memstats_heap_inuse_bytes{component="tsb"}) by (component) ) * 100 |

go_memstats_heap_inuse_bytes | component | ( sum(go_memstats_heap_inuse_bytes{component="mpc"}) by (component) / sum(go_memstats_heap_idle_bytes{component="mpc"} + go_memstats_heap_inuse_bytes{component="mpc"}) by (component) ) * 100 |
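Each expression in this panel computes in-use heap bytes as a percentage of in-use plus idle heap bytes. A minimal Python sketch of the same arithmetic follows. The byte values are illustrative only, and returning 0 for an empty heap is a choice made for this sketch.

```python
def heap_utilization_percent(inuse_bytes, idle_bytes):
    """Percentage of the heap (in-use plus idle spans) that is in use."""
    total = inuse_bytes + idle_bytes
    if total == 0:
        return 0.0  # sketch-only choice to avoid dividing by zero
    return inuse_bytes / total * 100


# Hypothetical values: 75 MiB in use, 25 MiB idle.
print(heap_utilization_percent(75 * 1024 * 1024, 25 * 1024 * 1024))
```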

GC CPU Fraction by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_gc_duration_seconds_sum | component plane | sum( rate(go_gc_duration_seconds_sum{component= |

process_cpu_seconds_total | component plane | sum( rate(go_gc_duration_seconds_sum{component= |

Goroutines by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_goroutines | component plane | sum(max_over_time(go_goroutines{component=~"tsb|mpc|xcp", plane="management"}[5m])) by (component) |

Heap Sys by Component in Management Plane

| Metric Name | Labels | PromQL Expression |

|---|---|---|

go_memstats_heap_sys_bytes | component plane | sum(max_over_time(go_memstats_heap_sys_bytes{component=~"tsb|mpc|xcp", plane="management"}[5m])) by (component) |

gRPC Server Calls Started Rate

The rate of RPCs started on the server.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

grpc_server_started_total | component grpc_method | sum(rate(grpc_server_started_total{component="tsb", grpc_method=~"GetAllClusters|UpdateClusterState|GetAllConfigObjects"}[5m])) by (grpc_method, component) |

gRPC Server Handled Rate

The rate of RPCs completed on the server.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

grpc_server_handled_total | component grpc_method | sum(rate(grpc_server_handled_total{component="tsb", grpc_method=~"GetAllClusters|UpdateClusterState|GetAllConfigObjects"}[5m])) by (grpc_method, component) |

gRPC Client Calls Started Rate

The rate of RPCs started on the client.

| Metric Name | Labels | PromQL Expression |

|---|---|---|

grpc_client_started_total | component | sum(rate(grpc_client_started_total{component="mpc"}[5m])) by (grpc_method, component) |